Unanswered Questions About DeepSeek Revealed

This week kicks off a series of tech companies reporting earnings, so their response to the DeepSeek stunner could lead to tumultuous market movements in the days and weeks to come. "The bottom line is the US outperformance has been driven by tech and the lead that US companies have in AI," Lerner said. That dragged down the broader stock market, because tech stocks make up a significant chunk of the market - tech constitutes about 45% of the S&P 500, according to Keith Lerner, analyst at Truist.

Be sure to install only the official Continue extension. Choose a DeepSeek model for your assistant to start the conversation. LobeChat is an open-source large language model conversation platform dedicated to creating a refined interface and an excellent user experience, supporting seamless integration with DeepSeek models.

What the agents are made of: These days, more than half of the stuff I write about in Import AI involves a Transformer architecture model (developed 2017). Not here! These agents use residual networks which feed into an LSTM (for memory) and then have some fully connected layers and an actor loss and MLE loss.

The latest version, DeepSeek-V2, has undergone significant optimizations in architecture and performance, with a reported 42.5% reduction in training costs and a 93.3% reduction in key-value cache size compared with its predecessor.

Register with LobeChat now, integrate it with the DeepSeek API, and experience the latest achievements in artificial intelligence technology. LobeChat supports integration with almost all LLMs and maintains high-frequency updates. SGLang also supports multi-node tensor parallelism, enabling you to run this model on multiple network-connected machines.

US stocks dropped sharply Monday - and chipmaker Nvidia lost nearly $600 billion in market value - after a surprise advancement from a Chinese artificial intelligence company, DeepSeek, threatened the aura of invincibility surrounding America's technology industry. Meta (META) and Alphabet (GOOGL), Google's parent company, were also down sharply. DeepSeek, a one-year-old startup, revealed a stunning capability last week: It presented a ChatGPT-like AI model called R1, which has all the familiar abilities, operating at a fraction of the cost of OpenAI's, Google's or Meta's popular AI models.

Closed SOTA LLMs (GPT-4o, Gemini 1.5, Claude 3.5) had marginal improvements over their predecessors, sometimes even falling behind (e.g. GPT-4o hallucinating more than previous versions).

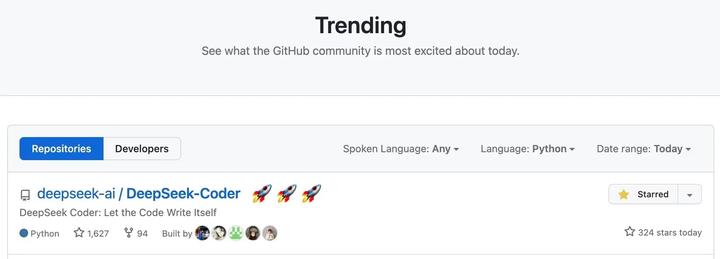

A spate of open-source releases in late 2024 put the startup on the map, including the large language model "v3", which outperformed all of Meta's open-source LLMs and rivaled OpenAI's closed-source GPT-4o. Mixture of Experts (MoE) Architecture: DeepSeek-V2 adopts a mixture-of-experts mechanism, allowing the model to activate only a subset of parameters during inference. "In the first stage, two separate experts are trained: one that learns to get up from the ground and another that learns to score against a fixed, random opponent." Some experts fear that the government of China could use the A.I. But the U.S. government appears to be growing wary of what it perceives as harmful foreign influence. The upshot: the U.S. So, what is DeepSeek and what could it mean for U.S. As these newer, export-controlled chips are increasingly used by U.S. That means DeepSeek was able to achieve its low-cost model on under-powered AI chips. This code repository and the model weights are licensed under the MIT License.

Whether in code generation, mathematical reasoning, or multilingual conversations, DeepSeek provides excellent performance. Having CPU instruction sets like AVX, AVX2, and AVX-512 can further improve performance if available. Pretty good: They train two types of model, a 7B and a 67B, then they compare performance with the 7B and 70B LLaMa2 models from Facebook.

The company followed up with the release of V3 in December 2024. V3 is a 671 billion-parameter model that reportedly took less than 2 months to train. For the uninitiated, FLOP measures the amount of computational power (i.e., compute) required to train an AI system. Crucially, ATPs improve power efficiency since there is less resistance and capacitance to overcome.

This not only improves computational efficiency but also significantly reduces training costs and inference time. This significantly reduces memory consumption. Multi-Head Latent Attention (MLA): This novel attention mechanism reduces the bottleneck of key-value caches during inference, enhancing the model's ability to handle long contexts.

DeepSeek is a powerful, advanced open-source large language model (LLM) that, through the LobeChat platform, allows users to fully utilize its advantages and enhance interactive experiences.
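The mixture-of-experts idea mentioned above, where only a subset of parameters is activated for each input, can be illustrated with a toy top-k router. This is a minimal sketch under stated assumptions, not DeepSeek's actual routing code: the function names (`route_top_k`, `moe_forward`), the scalar "experts", and the gate scores are all hypothetical and chosen only to show the mechanism.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(gate_scores, k):
    """Pick the k highest-scoring experts and renormalize their weights.

    Hypothetical helper: returns a list of (expert_index, weight) pairs
    whose weights sum to 1.
    """
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, experts, gate_scores, k=2):
    """Evaluate only the selected experts and mix their outputs."""
    selected = route_top_k(gate_scores, k)
    return sum(weight * experts[i](x) for i, weight in selected)

# Toy setup (assumed values): four "experts" that just scale their input.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]
gate_scores = [0.1, 2.0, -1.0, 1.5]

selected = route_top_k(gate_scores, k=2)
output = moe_forward(3.0, experts, gate_scores, k=2)
print(selected, output)
```

With `k=2`, only two of the four experts are evaluated for this input, which is the source of the compute savings the text describes; in a real model the gate scores would come from a learned layer rather than a fixed list.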
