Who Is DeepSeek?

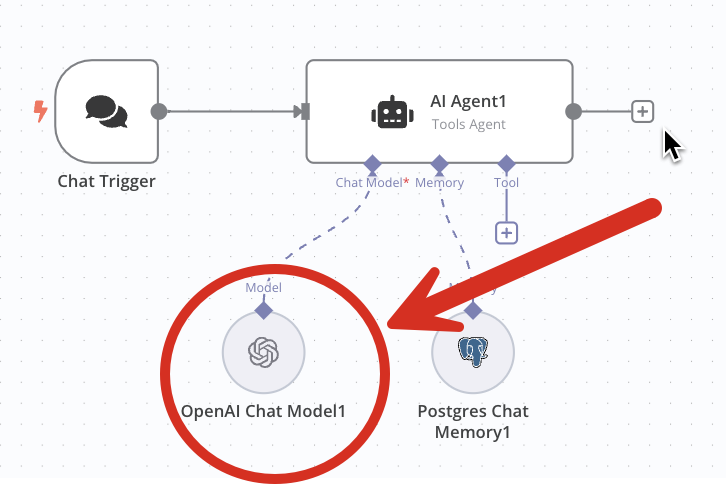

KEY environment variable with your DeepSeek API key. It is also production-ready, with support for caching, fallbacks, retries, timeouts, and load balancing, and can be edge-deployed for minimum latency. We already see that trend with tool-calling models, and if you have seen the recent Apple WWDC, you can imagine the usability of LLMs. As we have seen throughout this blog, these have been really exciting times with the launch of these five powerful language models. In this blog, we'll explore how generative AI is reshaping developer productivity and redefining the entire software development lifecycle (SDLC). How is generative AI impacting developer productivity? Over time, I've used many developer tools, developer productivity tools, and general productivity tools like Notion. Most of these tools have helped me get better at what I wanted to do and brought sanity to several of my workflows. Smarter Conversations: LLMs are getting better at understanding and responding to human language. Imagine I have to quickly generate an OpenAPI spec: today I can do it with one of the local LLMs, like Llama, using Ollama. Turning small models into reasoning models: "To equip more efficient smaller models with reasoning capabilities like DeepSeek-R1, we directly fine-tuned open-source models like Qwen, and Llama using the 800k samples curated with DeepSeek-R1," DeepSeek write.
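
The retries and fallbacks mentioned above can be illustrated with a minimal sketch. It is not the code of any particular gateway: the function names `call_with_fallback` and `make_flaky`, the retry count, and the backoff schedule are all assumptions made for illustration, and the "providers" are simulated callables rather than real API clients.

```python
import time
from typing import Callable, Optional, Sequence


def call_with_fallback(
    providers: Sequence[Callable[[str], str]],
    prompt: str,
    max_retries: int = 2,
    base_delay: float = 0.0,
) -> str:
    """Try each provider in order, retrying each one with exponential backoff.

    Hypothetical helper: a provider is any callable that takes a prompt and
    returns text, raising ConnectionError or TimeoutError on failure.
    """
    last_error: Optional[Exception] = None
    for provider in providers:
        for attempt in range(max_retries + 1):
            try:
                return provider(prompt)
            except (ConnectionError, TimeoutError) as exc:
                last_error = exc
                if attempt < max_retries:
                    # Back off before retrying the same provider.
                    time.sleep(base_delay * (2 ** attempt))
        # Retries exhausted for this provider: fall back to the next one.
    raise RuntimeError("all providers failed") from last_error


def make_flaky(failures: int, name: str) -> Callable[[str], str]:
    """Build a simulated provider that fails `failures` times, then succeeds."""
    state = {"left": failures}

    def provider(prompt: str) -> str:
        if state["left"] > 0:
            state["left"] -= 1
            raise ConnectionError("simulated outage")
        return f"{name}: {prompt}"

    return provider


if __name__ == "__main__":
    primary = make_flaky(5, "primary")  # fails on all three attempts
    backup = make_flaky(1, "backup")    # fails once, then answers
    print(call_with_fallback([primary, backup], "hi"))  # prints "backup: hi"
```

In a real deployment the providers would wrap HTTP clients with their own timeouts, and `base_delay` would be non-zero.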

Detailed Analysis: Provide in-depth financial or technical analysis using structured data inputs. Coming from China, DeepSeek's technical innovations are turning heads in Silicon Valley. Today, they are large intelligence hoarders. Nvidia has introduced Nemotron-4 340B, a family of models designed to generate synthetic data for training large language models (LLMs). Another significant benefit of Nemotron-4 is its positive environmental impact. Nemotron-4 also promotes fairness in AI. Here are some examples of how to use our model. And as advances in hardware drive down costs and algorithmic progress increases compute efficiency, smaller models will increasingly access what are now considered dangerous capabilities. In other words, you take a bunch of robots (here, some relatively simple Google bots with a manipulator arm and eyes and mobility) and give them access to a giant model. DeepSeek LLM is an advanced language model available in both 7 billion and 67 billion parameters. Let be parameters. The parabola intersects the line at two points and . The paper attributes the model's mathematical reasoning abilities to two key factors: leveraging publicly available web data and introducing a novel optimization technique called Group Relative Policy Optimization (GRPO).
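
The "group relative" part of GRPO can be sketched in a few lines: several answers are sampled for the same prompt, and each answer's reward is normalized against the mean and standard deviation of its own group rather than against a learned value function. The snippet below covers only that advantage step, not the full training objective. The function name, the use of the population standard deviation, and the `eps` guard are assumptions made for illustration.

```python
from statistics import mean, pstdev
from typing import List, Sequence


def group_relative_advantages(rewards: Sequence[float], eps: float = 1e-8) -> List[float]:
    """Normalize each reward against its own sampling group.

    advantage_i = (r_i - mean(r)) / (std(r) + eps)

    `eps` (an assumption of this sketch) avoids division by zero when every
    sample in the group received the same reward.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


if __name__ == "__main__":
    # Four sampled answers to one prompt: two judged correct (1.0), two wrong (0.0).
    print(group_relative_advantages([1.0, 0.0, 0.0, 1.0]))
    # A group where every answer scored the same carries no learning signal.
    print(group_relative_advantages([2.0, 2.0, 2.0]))
```

Correct answers in a mixed group get a positive advantage and wrong ones a negative advantage, while a uniformly scored group yields zeros.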

Llama 3 405B used 30.8M GPU hours for training, compared with DeepSeek V3's 2.6M GPU hours, roughly 12 times as many (more information in the Llama 3 model card). Generating synthetic data is more resource-efficient than traditional training methods. 0.9 per output token compared to GPT-4o's $15. As developers and enterprises pick up generative AI, I expect more solutionised models in the ecosystem, and perhaps more open-source ones too. However, with generative AI, it has become turnkey. Personal Assistant: Future LLMs may be able to manage your schedule, remind you of important events, and even help you make decisions by providing useful information. This model is a blend of the impressive Hermes 2 Pro and Meta's Llama-3 Instruct, resulting in a powerhouse that excels at general tasks, conversations, and even specialized functions like calling APIs and generating structured JSON data. It helps you with general conversations, completing specific tasks, or handling specialized functions. Whether it's enhancing conversations, generating creative content, or providing detailed analysis, these models really make a big impact. It also highlights how I expect Chinese companies to deal with things like the impact of export controls: by building and refining efficient systems for doing large-scale AI training and sharing the details of their buildouts openly.

At Portkey, we are helping developers building on LLMs with a blazing-fast AI Gateway that provides resiliency features like load balancing, fallbacks, and semantic caching. The praise for DeepSeek-V2.5 follows a still-ongoing controversy around HyperWrite's Reflection 70B, which co-founder and CEO Matt Shumer claimed on September 5 was "the world's top open-source AI model," according to his internal benchmarks, only to see those claims challenged by independent researchers and the wider AI research community, who have so far failed to reproduce the stated results. There is some controversy over DeepSeek training on outputs from OpenAI models, which is forbidden to "competitors" in OpenAI's terms of service, but this is now harder to prove given how many outputs from ChatGPT are generally available on the web. Instead of simply passing in the current file, the dependent files within the repository are parsed. This repo contains GGUF format model files for DeepSeek's DeepSeek Coder 1.3B Instruct. Step 3: Concatenating dependent files to form a single example and employing repo-level MinHash for deduplication. Downloaded over 140k times in a week.
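
The repo-level deduplication step described above can be sketched as follows. This is a toy illustration, not DeepSeek's pipeline: it assumes each repository's files are already in dependency order, and the shingle size, number of hash functions, similarity threshold, and every function name are choices made for this example. Only the Python standard library is used.

```python
import hashlib
from typing import Dict, List, Set


def shingles(text: str, k: int = 3) -> Set[str]:
    """Split text into overlapping k-token shingles."""
    tokens = text.split()
    if len(tokens) < k:
        return {" ".join(tokens)}
    return {" ".join(tokens[i:i + k]) for i in range(len(tokens) - k + 1)}


def minhash_signature(text: str, num_perm: int = 64, k: int = 3) -> List[int]:
    """For each of num_perm salted hash functions, keep the minimum shingle hash."""
    items = shingles(text, k)
    signature = []
    for seed in range(num_perm):
        salt = seed.to_bytes(8, "little")  # a different salt gives a different hash function
        signature.append(min(
            int.from_bytes(
                hashlib.blake2b(s.encode("utf-8"), digest_size=8, salt=salt).digest(),
                "big",
            )
            for s in items
        ))
    return signature


def estimated_jaccard(sig_a: List[int], sig_b: List[int]) -> float:
    """The fraction of matching signature slots estimates Jaccard similarity."""
    return sum(a == b for a, b in zip(sig_a, sig_b)) / len(sig_a)


def dedup_repos(repos: Dict[str, List[str]], threshold: float = 0.8) -> List[str]:
    """Concatenate each repo's files into one example and drop near-duplicates."""
    kept_names: List[str] = []
    kept_sigs: List[List[int]] = []
    for name, files in repos.items():
        example = "\n".join(files)  # one training example per repository
        sig = minhash_signature(example)
        if all(estimated_jaccard(sig, other) < threshold for other in kept_sigs):
            kept_names.append(name)
            kept_sigs.append(sig)
    return kept_names


if __name__ == "__main__":
    repos = {
        "repo_a": ["def add(a, b): return a + b", "from util import add\nprint(add(1, 2))"],
        "repo_b": ["def add(a, b): return a + b", "from util import add\nprint(add(1, 2))"],
        "repo_c": ["class Stack: pass", "import stack\ns = stack.Stack()"],
    }
    print(dedup_repos(repos))
```

Comparing every new signature against every kept one is quadratic in the number of repositories. At scale, MinHash is usually paired with locality-sensitive hashing so that only likely matches are compared.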
