Improve Your Try ChatGPT With the Following Tips

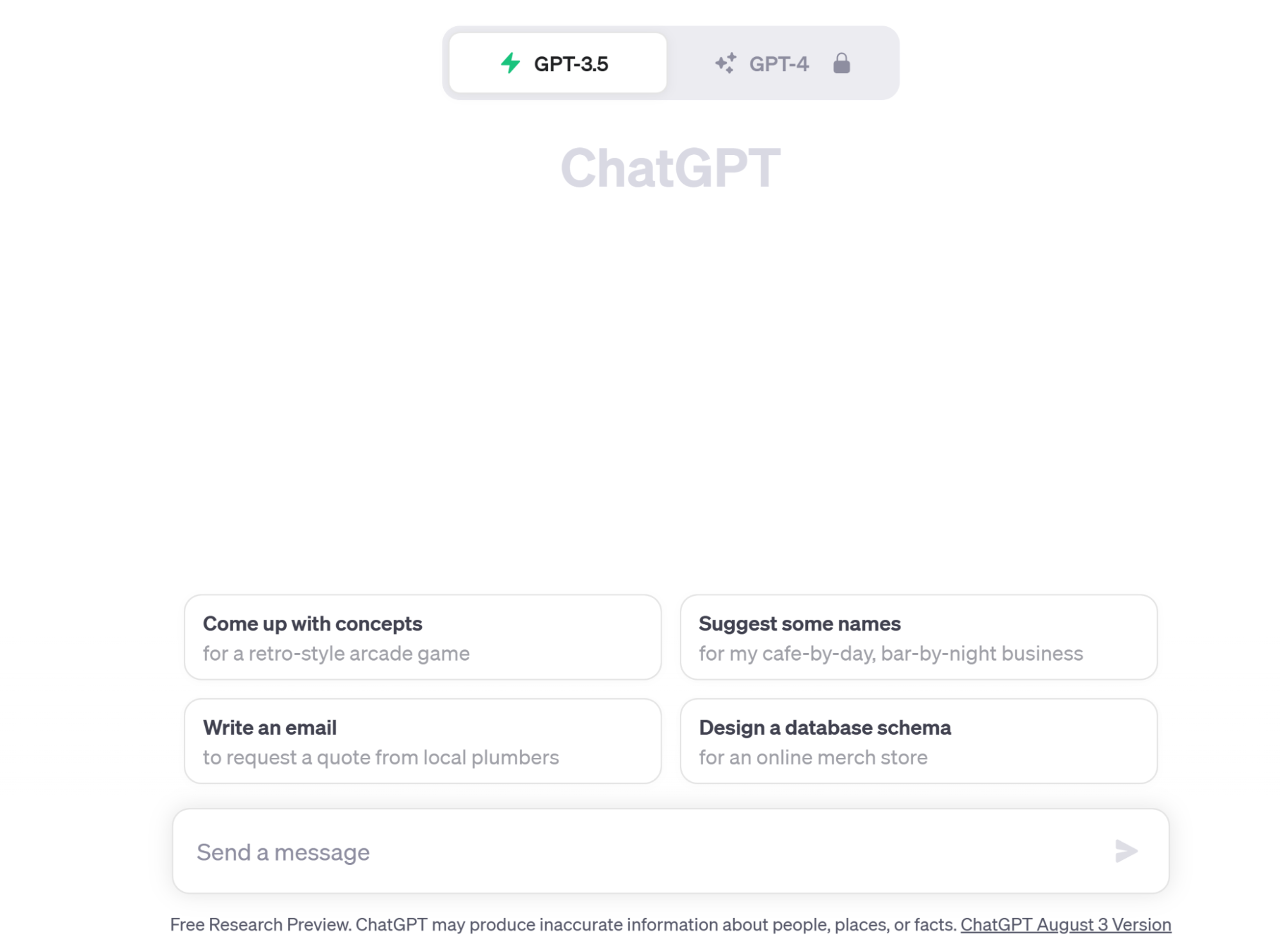

He posted it on a Discord server on 15 January 2023, most likely right after it was created. You can learn about the supported models and how to start the LLM server. This warning indicates that there were no API server IP addresses listed in storage, causing the removal of previous endpoints from the Kubernetes service to fail. GPT-4o and GPT-4o-mini have a 128k-token context window, which seems fairly large, but building an entire backend service on GPT-4o in place of business logic does not seem like a reasonable idea. This is what a typical function calling scenario looks like with a simple tool or function. I will show you a simple example of how to connect Ell to OpenAI to use GPT. The amount of information available to the model depended only on me, since the API can handle 128 functions, more than enough for most use cases. The tool can write new SEO-optimized content and also improve any existing content.
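To make the function calling scenario above a bit more concrete, here is a minimal sketch of the dispatch step on the application side. It does not call OpenAI or Ell; the `TOOLS` registry, the `dispatch` helper, and the dictionary shape of the tool call are all assumptions chosen for illustration, loosely modeled on the common pattern where the model returns a tool name plus JSON-encoded arguments.

```python
import json
import math


def sin(x: float) -> float:
    """A simple tool the model is allowed to request."""
    return math.sin(x)


# Hypothetical tool registry: maps a tool name to a plain Python callable.
TOOLS = {"sin": sin}


def dispatch(tool_call: dict) -> float:
    """Run the tool named in a model-produced call and return its result."""
    name = tool_call["name"]
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    # Arguments are assumed to arrive as a JSON string and are decoded first.
    args = json.loads(tool_call["arguments"])
    return TOOLS[name](**args)


# Example of a call as the model might produce it (hand-written here).
example_call = {"name": "sin", "arguments": '{"x": 0.5}'}
print(dispatch(example_call))
```

In a real application the result would be sent back to the model as a tool message so it can produce its final answer.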

Each prompt and tool is represented as a Python function, and the database keeps track of changes to the functions' signatures and implementations. We'll print the actual values computed directly by Python alongside the results produced by the model. Ell is a fairly new Python library that is similar to LangChain. Assuming you have Python 3 with venv installed globally, we will create a new virtual environment and install ell. This makes Ell an excellent tool for prompt engineering. In this tutorial, we'll build an AI text humanizer tool that can convert AI-generated text into human-like text. Reports on different topics in several areas can be generated. Users can copy the generated summary in Markdown. This way we can ask the model to compare two numbers that are embedded inside the sin function, or any other function we come up with. What the model is capable of depends on your implementation.
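The reference side of that comparison, the values computed directly by Python, might look like the sketch below. The helper name `compare_sin` and the sample inputs are assumptions made for this example; the model's own answers would be printed next to these lines for a manual check.

```python
import math


def compare_sin(a: float, b: float) -> str:
    """Return '<', '>' or '=' describing sin(a) relative to sin(b)."""
    left, right = math.sin(a), math.sin(b)
    # Treat values that differ only by floating-point noise as equal.
    if math.isclose(left, right, abs_tol=1e-12):
        return "="
    return "<" if left < right else ">"


# Reference answers to print next to whatever the model returns.
for a, b in [(1.0, 2.0), (0.5, 3.0), (0.0, math.pi)]:
    print(f"sin({a}) {compare_sin(a, b)} sin({b})")
```

The tolerance matters for inputs such as 0 and pi, where the exact sines are equal but the floating-point results differ slightly.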

CopilotKit offers two hooks that let us handle the user's request and plug into the application state: useCopilotAction and useMakeCopilotReadable. I will give my application at most 5 loops before it prints an error. I'll just print the results and let you check whether they are correct. Depending on the mood and temperature, the model will understand
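The cap of 5 loops can be sketched as a bounded loop around the model. Everything here is hypothetical scaffolding: `run_with_cap`, the reply dictionaries, and `fake_model` (a scripted stand-in, not a real model) are assumptions for illustration, and the sketch raises an exception where the text above prints an error.

```python
import math
from typing import Callable

MAX_LOOPS = 5  # the cap described in the text


def run_with_cap(model_step: Callable[[list], dict], max_loops: int = MAX_LOOPS) -> str:
    """Call model_step until it returns a final answer or the cap is hit."""
    history: list = []
    for _ in range(max_loops):
        reply = model_step(history)
        if reply["type"] == "final":
            return reply["content"]
        # Otherwise the reply is a tool request: run it and record the result.
        history.append(math.sin(reply["x"]))
    raise RuntimeError(f"no final answer after {max_loops} loops")


def fake_model(history: list) -> dict:
    """Scripted stand-in for a model: asks for two sines, then answers."""
    inputs = [1.0, 2.0]
    if len(history) < len(inputs):
        return {"type": "tool", "x": inputs[len(history)]}
    verdict = "larger" if history[0] > history[1] else "smaller"
    return {"type": "final", "content": verdict}


print(run_with_cap(fake_model))
```

A caller that prefers printing to raising can wrap the call in `try`/`except RuntimeError` and print the message.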
