6 Tips for DeepSeek

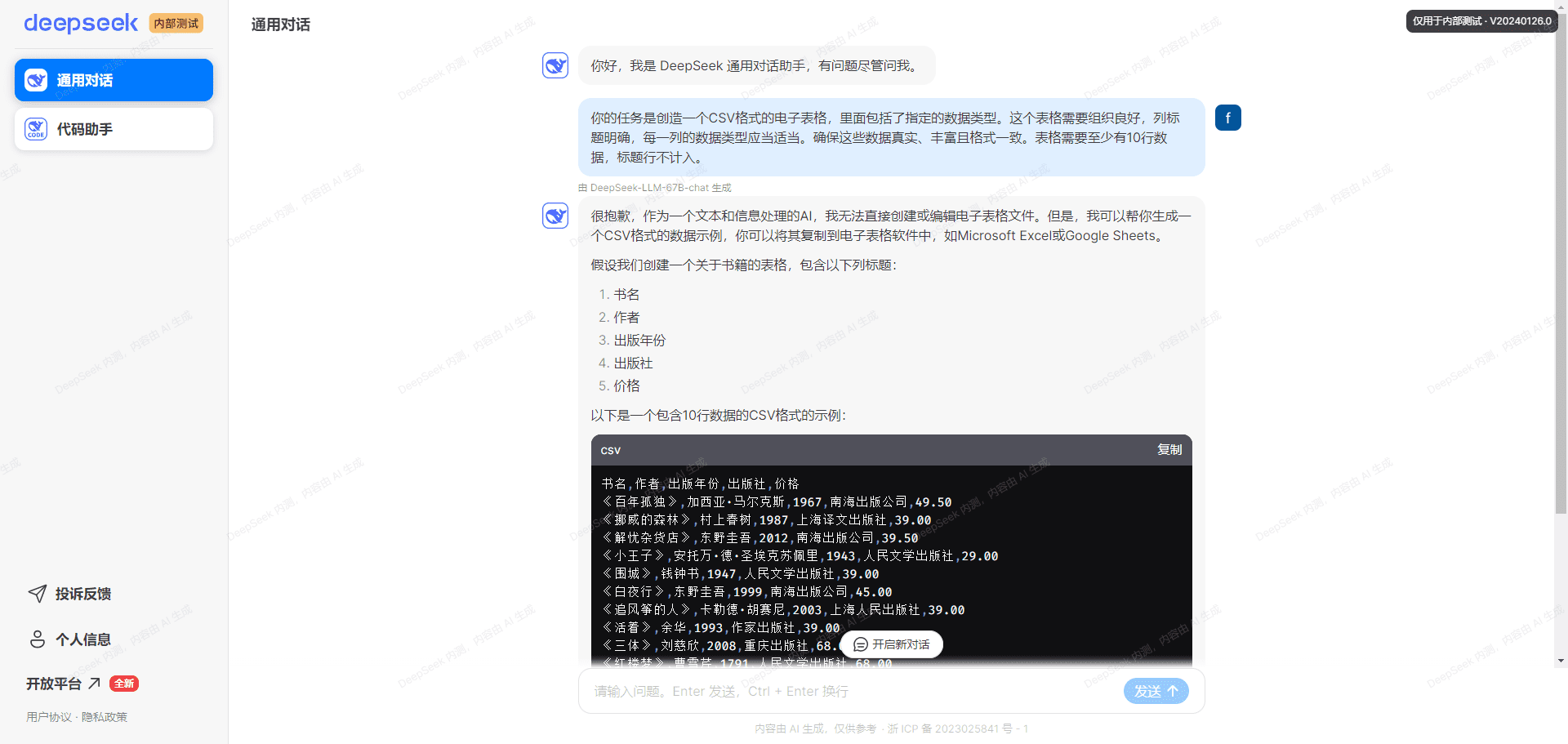

Many of the techniques DeepSeek describes in their paper are things that our OLMo team at Ai2 would benefit from having access to and is taking direct inspiration from. This information assumes legal access and institutional oversight. Flexing on how much compute you have access to is common practice among AI companies. DeepSeek's compute is far less than Meta's, but it is still one of the organizations in the world with the most access to compute. The cost of progress in AI is much closer to this, at least until substantial improvements are made to the open versions of infrastructure (code and data). For Chinese companies that are feeling the pressure of substantial chip export controls, it cannot be seen as particularly surprising to have the angle be "Wow, we can do way more than you with less." I'd probably do the same in their shoes; it is far more motivating than "my cluster is bigger than yours." This goes to say that we need to understand how important the narrative of compute numbers is to their reporting. The success here is that they're relevant among American technology companies spending what is approaching or surpassing $10B per year on AI models.

By 2022, the Chinese Ministry of Education had approved 440 universities to offer undergraduate degrees specializing in AI, according to a report from the Center for Security and Emerging Technology (CSET) at Georgetown University in Washington, DC. Lower bounds for compute are essential to understanding the progress of technology and peak efficiency, but without substantial compute headroom to experiment on large-scale models, DeepSeek-V3 would never have existed. As the DeepSeek-V3 report puts it: "During the pre-training stage, training DeepSeek-V3 on each trillion tokens requires only 180K H800 GPU hours, i.e., 3.7 days on our own cluster with 2048 H800 GPUs." For reference, the Nvidia H800 is a "nerfed" version of the H100 chip. Nvidia quickly made new versions of their A100 and H100 GPUs, named the A800 and H800, that are effectively just as capable. DeepSeek uses custom multi-GPU communication protocols to make up for the slower communication speed of the H800 and to optimize pretraining throughput. While NVLink speed is cut to 400GB/s, that is not restrictive for most of the parallelism strategies that are employed, such as 8x Tensor Parallel, Fully Sharded Data Parallel, and Pipeline Parallelism.
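The "3.7 days" figure follows directly from the two numbers quoted above. Here is a minimal sketch of the arithmetic, assuming all 2048 GPUs run the whole time with no downtime; the function and constant names are illustrative and are not taken from the report.

```python
# Figures quoted in the text: 180K H800 GPU hours per trillion tokens,
# run on a cluster of 2048 H800 GPUs.
GPU_HOURS_PER_TRILLION_TOKENS = 180_000
CLUSTER_GPUS = 2048


def wall_clock_days(gpu_hours: float, num_gpus: int) -> float:
    """Convert a GPU-hour budget into wall-clock days.

    Assumes every GPU is busy for the whole run (no failures or idle time).
    """
    hours = gpu_hours / num_gpus
    return hours / 24


days = wall_clock_days(GPU_HOURS_PER_TRILLION_TOKENS, CLUSTER_GPUS)
print(f"{days:.2f} days per trillion tokens")  # about 3.66, i.e. roughly 3.7
```

The same function can be reused to see how the wall-clock time would change on a larger or smaller cluster, with the caveat that real scaling is rarely perfectly linear.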

Among the universal and loud praise, there has been some skepticism about how much of this report is really novel breakthroughs, a la "did DeepSeek actually need Pipeline Parallelism" or "HPC has been doing this type of compute optimization forever (or also in TPU land)". First, we need to contextualize the GPU hours themselves. The costs to train models will continue to fall with open-weight models, especially when accompanied by detailed technical reports, but the pace of diffusion is bottlenecked by the need for challenging reverse engineering / reproduction efforts. The training of DeepSeek-V3 is cost-effective due to the support of FP8 training and meticulous engineering optimizations. Furthermore, DeepSeek-V3 pioneers an auxiliary-loss-free strategy for load balancing and sets a multi-token prediction training objective for stronger performance. We'll get into the specific numbers below, but the question is which of the many technical innovations listed in the DeepSeek V3 report contributed most to its learning efficiency, i.e. model performance relative to compute used. One of them is multi-head latent attention (MLA), which minimizes the memory usage of attention operators while maintaining modeling performance.
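To give a feel for what "auxiliary-loss-free" load balancing can mean, here is a minimal pure-Python sketch of one way to do it: each expert carries a bias that is added to its routing score when picking the top-k experts, and the bias is nudged after each step depending on whether the expert was over- or under-used. This is an illustration under stated assumptions, not DeepSeek's implementation; the function names, the `gamma` step size, and the use of the mean load as the threshold are all hypothetical choices made for this example.

```python
def route_top_k(scores, bias, k):
    """Pick k experts for one token by biased score.

    The bias only influences which experts are selected; a real model
    would still weight expert outputs by the unbiased scores.
    """
    ranked = sorted(
        range(len(scores)),
        key=lambda i: scores[i] + bias[i],
        reverse=True,
    )
    return ranked[:k]


def update_bias(bias, load, gamma):
    """Lower the bias of overloaded experts and raise it for underloaded ones.

    `load` is the number of tokens each expert received in the last step;
    `gamma` is a hypothetical fixed update step.
    """
    mean_load = sum(load) / len(load)
    new_bias = []
    for b, n in zip(bias, load):
        if n > mean_load:
            new_bias.append(b - gamma)
        elif n < mean_load:
            new_bias.append(b + gamma)
        else:
            new_bias.append(b)
    return new_bias


# Toy example: expert 0 is heavily favoured by the raw scores.
bias = [0.0, 0.0, 0.0]
chosen = route_top_k([0.9, 0.5, 0.1], bias, k=2)
bias = update_bias(bias, load=[4, 1, 1], gamma=0.1)
print(chosen, bias)
```

The point of the design is that balance is steered through the routing decision itself instead of through an extra loss term that competes with the language-modeling objective.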

A second point to consider is why DeepSeek is training on only 2048 GPUs while Meta highlights training their model on a cluster of more than 16K GPUs. This is likely DeepSeek's only pretraining cluster, and they have many other GPUs that are either not geographically co-located or lack chip-ban-restricted communication equipment, making the throughput of those other GPUs lower. It quickly adds subtitles to videos, making content more accessible to a wider audience, improving engagement, and enhancing the viewer experience. The model is optimized for both large-scale inference and small-batch local deployment, enhancing its versatility. Overall, the best local models and hosted models are pretty good at Solidity code completion, and not all models are created equal. This post revisits the technical details of DeepSeek V3, but focuses on how best to view the cost of training models at the frontier of AI and how these costs may be changing. It works best with commonly used AI writing tools.
