Important DeepSeek Smartphone Apps

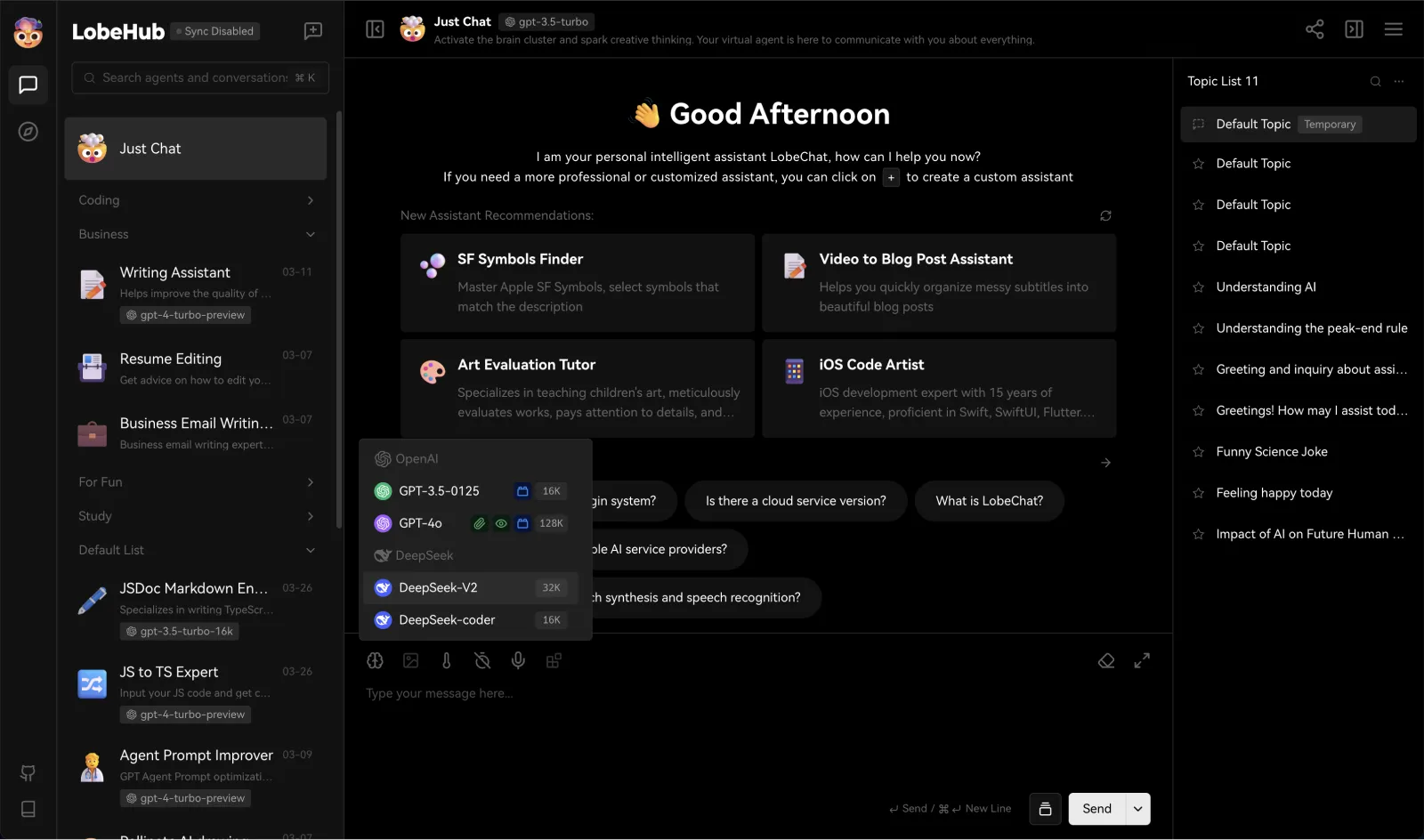

This post revisits the technical details of DeepSeek V3, but focuses on how best to view the cost of training models at the frontier of AI and how these costs may be changing. The $5M figure for the final training run should not be your basis for how much frontier AI models cost. DeepSeek-V3 demonstrates competitive performance, standing on par with top-tier models such as LLaMA-3.1-405B, GPT-4o, and Claude-Sonnet 3.5, while significantly outperforming Qwen2.5 72B. Moreover, DeepSeek-V3 excels on MMLU-Pro, a more challenging educational knowledge benchmark, where it closely trails Claude-Sonnet 3.5. On MMLU-Redux, a refined version of MMLU with corrected labels, DeepSeek-V3 surpasses its peers. SAL excels at answering simple questions about code and generating relatively simple code. As such, it’s adept at generating boilerplate code, but it quickly runs into the problems described above whenever business logic is introduced. The aforementioned chain-of-thought (CoT) approach can be seen as inference-time scaling because it makes inference more expensive by generating more output tokens. For Chinese companies that are feeling the pressure of substantial chip export controls, it cannot be seen as particularly surprising to have the angle be "Wow, we can do way more than you with less." I’d probably do the same in their shoes; it is far more motivating than "my cluster is bigger than yours." This goes to say that we need to understand how important the narrative of compute numbers is to their reporting.
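To make the point about CoT and inference-time scaling concrete, here is a minimal sketch of how per-request cost grows with the number of generated tokens. The function name, the token counts, and the per-million-token prices are all hypothetical values chosen for illustration; they are not taken from any provider's price list.

```python
def inference_cost_usd(prompt_tokens, output_tokens,
                       usd_per_m_input, usd_per_m_output):
    """Cost of one request when input and output tokens are billed per million."""
    return (prompt_tokens / 1e6) * usd_per_m_input \
         + (output_tokens / 1e6) * usd_per_m_output


# Hypothetical prices (USD per million tokens); assumptions, not real quotes.
USD_PER_M_INPUT = 1.0
USD_PER_M_OUTPUT = 2.0

# Same 200-token prompt, answered directly vs. with a long reasoning trace.
direct_cost = inference_cost_usd(200, 50, USD_PER_M_INPUT, USD_PER_M_OUTPUT)
cot_cost = inference_cost_usd(200, 1000, USD_PER_M_INPUT, USD_PER_M_OUTPUT)

if __name__ == "__main__":
    print(f"direct answer: ${direct_cost:.6f}")
    print(f"with CoT:      ${cot_cost:.6f}")
    print(f"cost ratio:    {cot_cost / direct_cost:.2f}x")
```

Under these assumed numbers the prompt is identical in both cases, so the whole difference in cost comes from the extra output tokens the reasoning trace produces.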

The joy of seeing your first line of code come to life is a feeling every aspiring developer knows! However, the alleged training efficiency seems to have come more from the application of good model engineering practices than from fundamental advances in AI technology. We’ll get into the specific numbers below, but the question is which of the many technical innovations listed in the DeepSeek V3 report contributed most to its learning efficiency, i.e. model performance relative to compute used. It almost feels like the shallowness of the model’s character or post-training makes it seem like the model has more to offer than it delivers. In all of these, DeepSeek V3 feels very capable, but the way it presents its information doesn’t feel exactly in line with my expectations from something like Claude or ChatGPT. Claude didn’t quite get it in one shot: I had to feed it the URL to a more recent Pyodide, and it got stuck in a bug loop, which I fixed by pasting the code into a fresh session. It’s a very capable model, but not one that sparks as much joy to use as Claude or super-polished apps like ChatGPT, so I don’t expect to keep using it long term.

In the example below, one of the coefficients (a0) is declared but never actually used in the calculation. AI can also struggle with variable types when these variables have predetermined sizes. SVH already includes a wide selection of built-in templates that integrate seamlessly into the editing process, ensuring correctness and allowing for swift customization of variable names while writing HDL code. While genAI models for HDL still suffer from many issues, SVH’s validation features significantly reduce the risks of using such generated code, ensuring higher quality and reliability. SVH and HDL generation tools work harmoniously, compensating for each other’s limitations. These issues highlight the limitations of AI models when pushed beyond their comfort zones. I seriously believe that small language models need to be pushed more. Even worse, 75% of all evaluated models could not even reach 50% compiling responses. The way to interpret both discussions should be grounded in the fact that the DeepSeek V3 model is extremely good on a per-FLOP comparison to peer models (likely even some closed API models, more on this below). All bells and whistles aside, the deliverable that matters is how good the models are relative to FLOPs spent.

Llama 3 405B used 30.8M GPU hours for training relative to DeepSeek V3’s 2.6M GPU hours (more information in the Llama 3 model card). At first glance, based on common benchmarks, DeepSeek R1 appears to perform similarly to OpenAI’s reasoning model o1. The fact that a model of this quality is distilled from DeepSeek’s reasoning model series, R1, makes me more optimistic about the reasoning model being the real deal. The assistant first thinks about the reasoning process in its mind and then provides the user with the answer. The move follows similar restrictions in Europe, Australia, and parts of Asia, as Western governments question the security implications of allowing a Chinese AI model to collect and process user data. DeepSeek V3 is their latest mixture-of-experts (MoE) model, trained on 14.8T tokens with 671B total and 37B active parameters. Since release, we’ve also gotten confirmation of the ChatBotArena ranking that places them in the top 10, above the likes of recent Gemini Pro models, Grok 2, o1-mini, and others. With only 37B active parameters, this is extremely appealing for many enterprise applications.
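The figures quoted above can be turned into a rough back-of-the-envelope comparison. The sketch below uses only the numbers from the text plus two labeled assumptions: a hypothetical rental price of $2 per GPU hour, and the common rule of thumb that training compute is roughly 6 × parameters × tokens, applied here to the active parameter count. Neither assumption is stated in this post, so treat the outputs as order-of-magnitude estimates.

```python
# Figures quoted in the text.
LLAMA3_405B_GPU_HOURS = 30.8e6
DEEPSEEK_V3_GPU_HOURS = 2.6e6
TOTAL_PARAMS = 671e9
ACTIVE_PARAMS = 37e9
TRAINING_TOKENS = 14.8e12

# Assumption (not from the text): rental price per GPU hour, in USD.
ASSUMED_USD_PER_GPU_HOUR = 2.0


def rental_cost_usd(gpu_hours, usd_per_gpu_hour):
    """Cost of renting GPUs for the given number of GPU hours."""
    return gpu_hours * usd_per_gpu_hour


def approx_training_flops(params, tokens):
    """Rule-of-thumb estimate: about 6 FLOPs per parameter per training token."""
    return 6.0 * params * tokens


gpu_hour_ratio = LLAMA3_405B_GPU_HOURS / DEEPSEEK_V3_GPU_HOURS
final_run_cost = rental_cost_usd(DEEPSEEK_V3_GPU_HOURS, ASSUMED_USD_PER_GPU_HOUR)
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS
train_flops = approx_training_flops(ACTIVE_PARAMS, TRAINING_TOKENS)

if __name__ == "__main__":
    print(f"GPU-hour ratio (Llama 3 405B / DeepSeek V3): {gpu_hour_ratio:.1f}x")
    print(f"Final-run rental cost at assumed rate: ${final_run_cost / 1e6:.1f}M")
    print(f"Active parameter fraction: {active_fraction:.1%}")
    print(f"Approximate training compute: {train_flops:.2e} FLOPs")
```

At the assumed rate, the final run lands near the $5M figure mentioned earlier, which covers only that one run and not the experiments, staff, or hardware behind it.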
