DeepSeek AI Is Disrupting the Tech Industry: What It Means for Legal Pr…

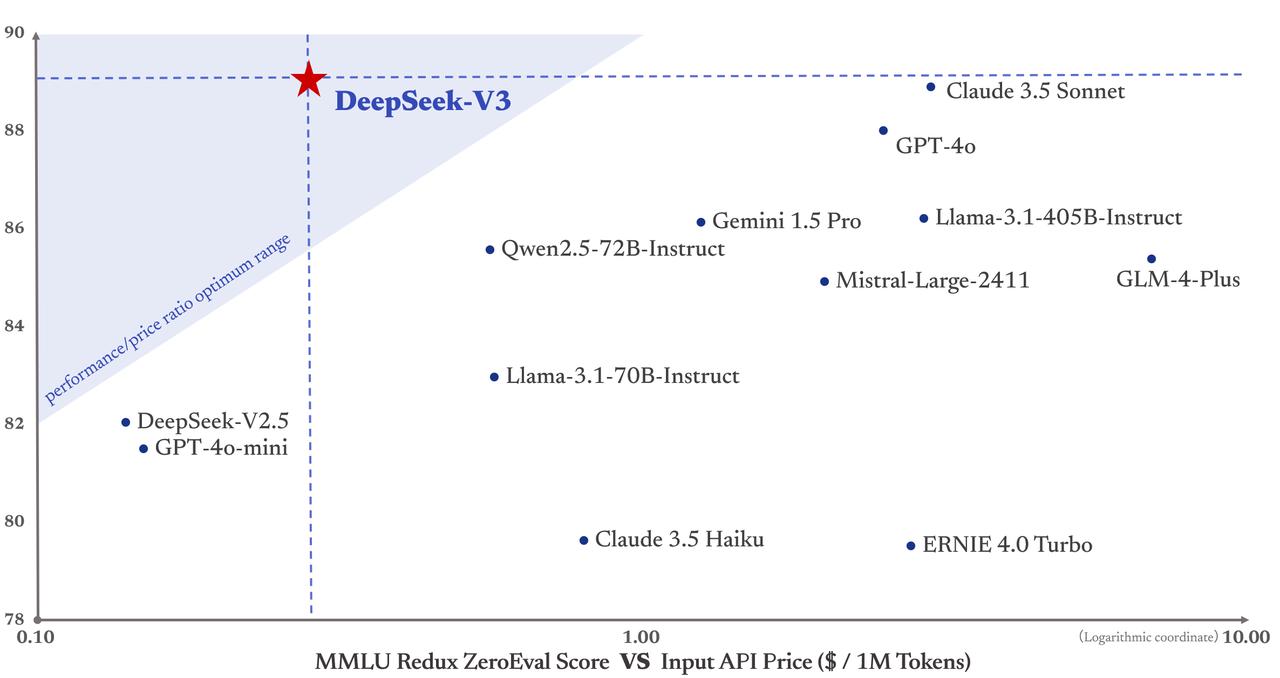

DeepSeek is shaking up the AI business with cost-efficient large language models that it claims perform just as well as rivals from giants like OpenAI and Meta. DeepSeek’s claims of building its impressive chatbot on a budget drew curiosity that helped make its AI assistant the No. 1 downloaded free app on Apple’s iPhone this week, ahead of U.S.-made chatbots ChatGPT and Google’s Gemini. Moreover, DeepSeek’s open-source approach enhances transparency and accountability in AI development. DeepSeek offers a revolutionary approach to content creation, enabling writers and marketers to produce high-quality content in less time and with greater ease. Compared with GPTQ, it offers faster Transformers-based inference with equivalent or better quality than the most commonly used GPTQ settings. 2. After installation, open your device’s Settings. Cost savings: both DeepSeek R1 and Browser Use are completely free and open source, eliminating subscription fees. Under this configuration, DeepSeek-V3 comprises 671B total parameters, of which 37B are activated for each token. However, this trick may introduce token boundary bias (Lundberg, 2023) when the model processes multi-line prompts without terminal line breaks, particularly for few-shot evaluation prompts. SEOs often struggle with technical issues such as crawl anomalies, parameter handling, or data cleanup, and may find DeepSeek a more reliable companion for these tasks.
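To put the 671B/37B figure in perspective, the share of parameters that actually participate in each token’s computation is a simple ratio. The sketch below only does that arithmetic; it does not imply a 37B memory footprint, since a mixture-of-experts model still has to store all of its experts somewhere. The constant and function names are hypothetical.

```python
# Sketch: fraction of DeepSeek-V3's parameters active per token,
# using the figures quoted above (671B total, 37B activated per token).
TOTAL_PARAMS_B = 671   # billions of parameters in the full MoE model
ACTIVE_PARAMS_B = 37   # billions of parameters activated for each token


def active_fraction(active: float, total: float) -> float:
    """Return the share of parameters that take part in one token's forward pass."""
    if total <= 0:
        raise ValueError("total must be positive")
    return active / total


frac = active_fraction(ACTIVE_PARAMS_B, TOTAL_PARAMS_B)
print(f"{frac:.1%} of parameters are active per token")
```

In other words, roughly one parameter in eighteen does work for any given token, which is where the per-token compute savings of the MoE design come from.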

So many may have believed it would be difficult for China to create a high-quality AI that rivalled companies like OpenAI. Conversely, OpenAI CEO Sam Altman welcomed DeepSeek to the AI race, stating "r1 is an impressive model, particularly around what they’re able to deliver for the price," in a recent post on X. "We will obviously deliver much better models and also it’s legit invigorating to have a new competitor!" 36Kr: Many believe that for startups, entering the field after major companies have established a consensus is no longer good timing.

The current architecture makes it cumbersome to fuse matrix transposition with GEMM operations, hence the need for support for transposed GEMM operations. Current implementations struggle to efficiently support online quantization, despite its effectiveness demonstrated in our research. In addition, the current communication implementation relies on expensive SMs (e.g., we allocate 20 of the 132 SMs available on the H800 GPU for this purpose), which can limit computational throughput. On the H800 architecture, however, it is typical for two WGMMAs to persist concurrently: while one warpgroup performs the promotion operation, the other is able to execute the MMA operation.
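The promotion step mentioned above can be illustrated with a small NumPy sketch. It assumes that "promotion" means periodically moving low-precision partial sums into a full-precision FP32 accumulator; float16 is only a stand-in for a limited-precision Tensor Core accumulator, and the 128-element interval is an illustrative choice rather than a documented hardware parameter. Function names are hypothetical.

```python
import numpy as np


def accumulate_low_precision(values: np.ndarray) -> float:
    """Sum every element into a float16 running total (stand-in for a limited-precision accumulator)."""
    acc = np.float16(0.0)
    for v in values.astype(np.float16):
        acc = np.float16(acc + v)
    return float(acc)


def accumulate_with_promotion(values: np.ndarray, interval: int = 128) -> float:
    """Sum `interval` elements at a time in float16, then promote each partial sum into a float32 total."""
    total = np.float32(0.0)
    for start in range(0, len(values), interval):
        partial = accumulate_low_precision(values[start:start + interval])
        total = np.float32(total + np.float32(partial))
    return float(total)


rng = np.random.default_rng(0)
x = rng.uniform(0.5, 1.0, size=4096).astype(np.float32)
reference = float(np.sum(x.astype(np.float64)))  # high-precision reference sum

err_naive = abs(accumulate_low_precision(x) - reference)
err_promoted = abs(accumulate_with_promotion(x) - reference)
print(f"float16-only error: {err_naive:.3f}, with promotion: {err_promoted:.3f}")
```

With 4,096 values between 0.5 and 1.0, the float16-only running sum stops growing once it reaches 2048 (float16 values at that magnitude are spaced 2 apart, so increments below 1 are rounded away), while the promoted version stays close to the reference. Real Tensor Core accumulators are not float16, so only the qualitative effect carries over.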

As illustrated in Figure 6, the Wgrad operation is performed in FP8. All-to-all communication of the dispatch and combine components is performed via direct point-to-point transfers over IB to achieve low latency. With this unified interface, computation units can easily accomplish operations such as read, write, multicast, and reduce across the entire IB-NVLink-unified domain by submitting communication requests based on simple primitives. This significantly reduces the dependency on communication bandwidth compared to serial computation and communication. Communication bandwidth is a critical bottleneck in the training of MoE models. Additionally, we leverage the IBGDA (NVIDIA, 2022) technology to further reduce latency and improve communication efficiency.

Each MoE layer consists of 1 shared expert and 256 routed experts, where the intermediate hidden dimension of each expert is 2048. Among the routed experts, 8 are activated for each token, and each token is guaranteed to be sent to at most 4 nodes. As mentioned earlier, our fine-grained quantization applies per-group scaling factors along the inner dimension K. These scaling factors can be efficiently multiplied on the CUDA Cores as part of the dequantization process with minimal additional computational cost.
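The routing constraint above (8 of 256 routed experts per token, spread over at most 4 nodes) can be sketched as node-limited top-k selection. The node count (8 nodes with 32 experts each) and the node-scoring rule (sum of each node's top 8/4 = 2 affinities) are assumptions for illustration; random numbers stand in for the gating network's affinity scores, and the shared expert is omitted because it is not part of the routing decision.

```python
import numpy as np

N_ROUTED = 256      # routed experts per MoE layer (from the text)
TOP_K = 8           # routed experts activated per token (from the text)
MAX_NODES = 4       # maximum nodes a token may be sent to (from the text)
N_NODES = 8         # hypothetical: experts spread evenly over 8 nodes
EXPERTS_PER_NODE = N_ROUTED // N_NODES


def node_limited_topk(affinity: np.ndarray) -> np.ndarray:
    """Pick TOP_K experts for one token while touching at most MAX_NODES nodes.

    Hypothetical scoring rule: a node's score is the sum of its
    TOP_K // MAX_NODES highest expert affinities.
    """
    per_node = affinity.reshape(N_NODES, EXPERTS_PER_NODE)
    k_per_node = TOP_K // MAX_NODES
    # Sort each node's affinities in descending order and sum the best k_per_node.
    node_scores = np.sort(per_node, axis=1)[:, ::-1][:, :k_per_node].sum(axis=1)
    chosen_nodes = np.argsort(node_scores)[::-1][:MAX_NODES]

    # Mask out experts on nodes that were not chosen, then take the top-k overall.
    masked = np.full_like(affinity, -np.inf)
    for node in chosen_nodes:
        lo = node * EXPERTS_PER_NODE
        masked[lo:lo + EXPERTS_PER_NODE] = affinity[lo:lo + EXPERTS_PER_NODE]
    return np.argsort(masked)[::-1][:TOP_K]


rng = np.random.default_rng(42)
scores = rng.random(N_ROUTED)  # stand-in affinity scores for one token
experts = node_limited_topk(scores)
nodes_used = set((experts // EXPERTS_PER_NODE).tolist())
print("experts:", sorted(experts.tolist()), "nodes:", sorted(nodes_used))
```

Choosing nodes before experts is what caps the cross-node traffic per token: whatever the affinities look like, the dispatch step only has to reach the selected nodes.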

To address this inefficiency, we recommend that future chips integrate FP8 cast and TMA (Tensor Memory Accelerator) access into a single fused operation, so that quantization can be completed during the transfer of activations from global memory to shared memory, avoiding frequent memory reads and writes. We therefore recommend that future chips support fine-grained quantization by enabling Tensor Cores to receive scaling factors and implement MMA with group scaling. We also recommend that future chip designs increase accumulation precision in Tensor Cores to support full-precision accumulation, or select an appropriate accumulation bit-width according to the accuracy requirements of training and inference algorithms. The attention part employs 4-way Tensor Parallelism (TP4) with Sequence Parallelism (SP), combined with 8-way Data Parallelism (DP8).
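MMA with group scaling, i.e. per-group scaling factors along the inner dimension K applied during dequantization, can be sketched in NumPy. Assumptions: a group size of 128, symmetric int8 rounding as a stand-in for FP8 (NumPy itself has no FP8 dtype), the same K-grouping for both operands (the actual design may use different block shapes for weights), and scales computed online from each group's maximum absolute value. The integer einsum plays the role of the low-precision MMA, and the second einsum plays the role the text assigns to the CUDA Cores. All names are hypothetical.

```python
import numpy as np

GROUP = 128    # group size along the inner dimension K (assumption)
QMAX = 127.0   # stand-in: symmetric int8 range instead of a real FP8 format


def quantize_groups_along_k(x: np.ndarray, axis: int):
    """Quantize x in groups of GROUP along `axis` (the K dimension), one scale per group."""
    k = x.shape[axis]
    assert k % GROUP == 0, "K must be a multiple of GROUP"
    moved = np.moveaxis(x, axis, -1)                        # put K last
    groups = moved.reshape(*moved.shape[:-1], k // GROUP, GROUP)
    amax = np.abs(groups).max(axis=-1, keepdims=True)       # computed online from the data
    scale = np.where(amax > 0, amax / QMAX, 1.0)
    q = np.round(groups / scale).astype(np.int8)
    return q, scale[..., 0]                                 # q: (..., G, GROUP), scale: (..., G)


def group_scaled_matmul(a: np.ndarray, b: np.ndarray) -> np.ndarray:
    """Approximate a @ b from per-group quantized operands, applying scales per K-group."""
    qa, sa = quantize_groups_along_k(a, axis=1)   # qa: (M, G, GROUP), sa: (M, G)
    qb, sb = quantize_groups_along_k(b, axis=0)   # qb: (N, G, GROUP), sb: (N, G)
    # Integer partial products for each K-group (what the low-precision MMA would produce).
    partial = np.einsum("mgk,ngk->mng", qa.astype(np.int32), qb.astype(np.int32))
    # Dequantize: scale each group's partial sum by both factors, then sum over groups.
    return np.einsum("mng,mg,ng->mn", partial.astype(np.float32), sa, sb)


rng = np.random.default_rng(0)
a = rng.standard_normal((4, 512)).astype(np.float32)
b = rng.standard_normal((512, 3)).astype(np.float32)
approx = group_scaled_matmul(a, b)
exact = a @ b
rel_err = np.abs(approx - exact).max() / np.abs(exact).max()
print(f"max relative error: {rel_err:.4f}")
```

Keeping one scale per K-group means an outlier presumably inflates only the scale of its own 128 values rather than a whole row or column, which is why letting Tensor Cores accept these scaling factors directly would avoid the separate dequantization pass.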
