Read These Eight Tips on DeepSeek To Double Your Small Business

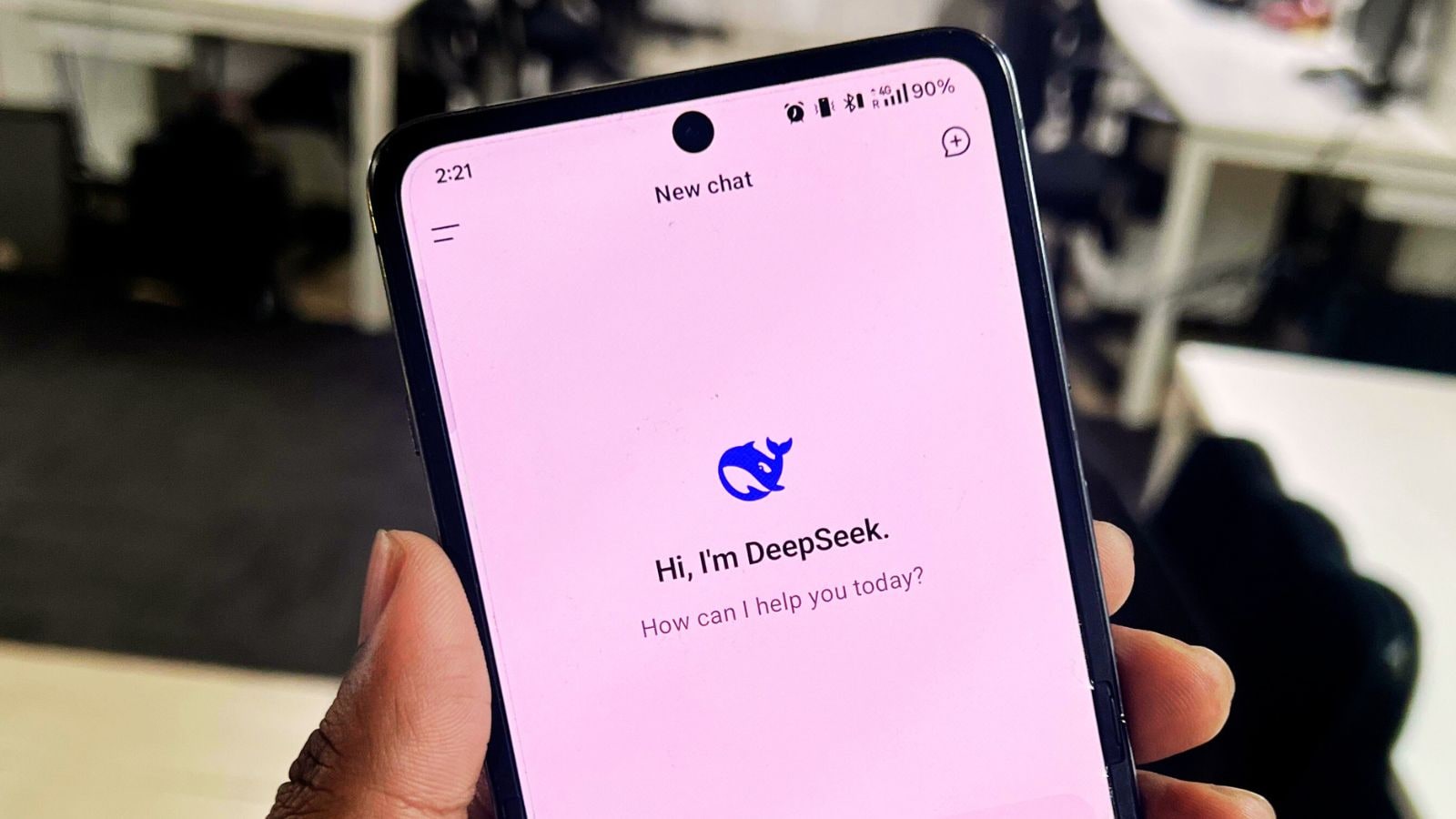

DeepSeek is shaking up the AI industry with cost-efficient large language models that it claims can perform just as well as rivals from giants like OpenAI and Meta. Sam Altman, CEO of OpenAI, said last year that the AI industry would need trillions of dollars in investment to support the development of the in-demand chips needed to power the electricity-hungry data centers that run the sector's complex models. If you need data for every task, the definition of "general" is not the same. Humans, including top players, need a lot of practice and training to become good at chess. The impact of DeepSeek spans various industries, including healthcare, finance, education, and marketing. Based in Hangzhou, Zhejiang, DeepSeek is owned and funded by Liang Wenfeng, co-founder of the Chinese hedge fund High-Flyer, who also serves as DeepSeek's CEO. The company's origins are in the financial sector: it emerged from High-Flyer, a Chinese hedge fund also co-founded by Liang Wenfeng. DeepSeek is a Chinese artificial intelligence startup that operates under High-Flyer, a quantitative hedge fund based in Hangzhou, China. Despite its popularity with international users, the app appears to censor answers to sensitive questions about China and its government.

In this article, I define "reasoning" as the process of answering questions that require complex, multi-step generation with intermediate steps. In other words, the goal is to refine LLMs so they excel at complex tasks that are best solved with intermediate steps, such as puzzles, advanced math, and coding challenges. I will describe the four main approaches to building reasoning models, that is, how we can enhance LLMs with reasoning capabilities. From the table, we can observe that the MTP (multi-token prediction) technique consistently improves model performance on most of the evaluation benchmarks. The evaluation results show that the distilled smaller dense models perform exceptionally well on benchmarks. Distillation is like a teacher transferring their knowledge to a student, allowing the student to perform tasks with similar proficiency but with less experience or fewer resources. Whether you're a student, researcher, or business owner, DeepSeek delivers faster, smarter, and more precise results. It's optimized for both small tasks and enterprise-level demands. In chess, if it is not "worse", it is at least not better than GPT-2. It is possible. I have tried to include some PGN headers in the prompt (in the same vein as earlier studies), but without tangible success.
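The teacher-student analogy describes knowledge distillation. Below is a minimal sketch of the classic logit-based formulation: the student is trained to match the teacher's temperature-softened output distribution, measured with KL divergence. This is an illustrative assumption, not necessarily how DeepSeek produced its distilled models; the function names and the default temperature of 2.0 are hypothetical choices.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax over a list of logits."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions.

    The temperature ** 2 factor is a common convention that keeps the
    loss magnitude comparable when the temperature changes.
    """
    p = softmax(teacher_logits, temperature)  # teacher "soft labels"
    q = softmax(student_logits, temperature)  # student predictions
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


teacher = [4.0, 1.0, 0.5]
print(distillation_loss(teacher, teacher))          # identical outputs: no loss
print(distillation_loss(teacher, [0.5, 1.0, 4.0]))  # disagreeing student: positive loss
```

A higher temperature flattens the teacher's distribution, so the student also learns from the relative probabilities the teacher assigns to wrong answers, not just from its top choice.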

A first hypothesis is that I didn't prompt DeepSeek-R1 correctly. The Prompt Report paper: a survey of prompting papers (podcast). Frankly, I don't think this is the main reason. It could also be that the chat model is not as strong as a completion model, but again I don't think that is the main reason. If you are a regular user and want to use DeepSeek Chat as an alternative to ChatGPT or other AI models, you may be able to use it for free if it is available through a platform that offers free access (such as the official DeepSeek website or third-party applications). Are we in a regression? DeepSeek-R1: is it a regression? When AGI becomes a reality, society's potential to leverage this technology to improve and grow will be at an all-time high. Eventually, someone will define it formally in a paper, only for it to be redefined in the next, and so on.
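The PGN-header prompting attempt mentioned earlier can be illustrated with a small helper. This is a sketch under assumptions: tag pairs such as `White`, `Black`, `Result`, `WhiteElo` and `BlackElo` are standard PGN tags, but the helper name, the header values, and the idea of ending the prompt with the next move number (so a completion-style model continues the game) are hypothetical, not the exact prompt the author used.

```python
def build_pgn_prompt(headers, moves):
    """Build a PGN-style prompt: tag pairs, a blank line, then numbered SAN moves.

    The prompt stops where the next move should be written, nudging a
    completion model to continue the game from that point.
    """
    lines = [f'[{tag} "{value}"]' for tag, value in headers.items()]
    movetext = []
    for i, move in enumerate(moves):
        if i % 2 == 0:  # White's moves are preceded by the move number
            movetext.append(f"{i // 2 + 1}. {move}")
        else:           # Black's moves follow without a number
            movetext.append(move)
    # If White is to move next, add the next move number as a cue.
    if len(moves) % 2 == 0:
        movetext.append(f"{len(moves) // 2 + 1}.")
    return "\n".join(lines) + "\n\n" + " ".join(movetext)


# Hypothetical header values, for illustration only.
headers = {
    "Event": "Casual game",
    "White": "Player A",
    "Black": "Player B",
    "Result": "*",
    "WhiteElo": "2800",
    "BlackElo": "2800",
}
print(build_pgn_prompt(headers, ["e4", "e5", "Nf3"]))
```

The rating headers are the part one would vary to test whether the model plays "up" to a stated strength; the move list itself uses standard algebraic notation.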

Transforming an LLM into a reasoning model also introduces certain drawbacks, which I will discuss later. LLM refers to the technology underpinning generative AI services such as ChatGPT. By tapping into the DeepSeek R1 AI bot, you'll see how cutting-edge technology can reshape productivity. Interestingly, the "truth" in chess can either be discovered (e.g., through extensive self-play), taught (e.g., through books, coaches, etc.), or extracted through an external engine (e.g., Stockfish). As a side note, I found that chess is a difficult task to excel at without specific training and data. So you turn the data into all sorts of question-and-answer formats, graphs, tables, images, god forbid podcasts, combine them with other sources and augment them, and you can create a formidable dataset. This applies not just to pretraining but across the training spectrum, especially with a frontier model or inference-time scaling (using the existing models to think for longer and generate better data). Compressor summary: Powerformer is a novel transformer architecture that learns robust power system state representations by using a section-adaptive attention mechanism and customized strategies, achieving better power dispatch for different transmission sections. GPT-2 was a bit more consistent and played better moves.