Methods to Become Better With Deepseek Chatgpt In 10 Minutes

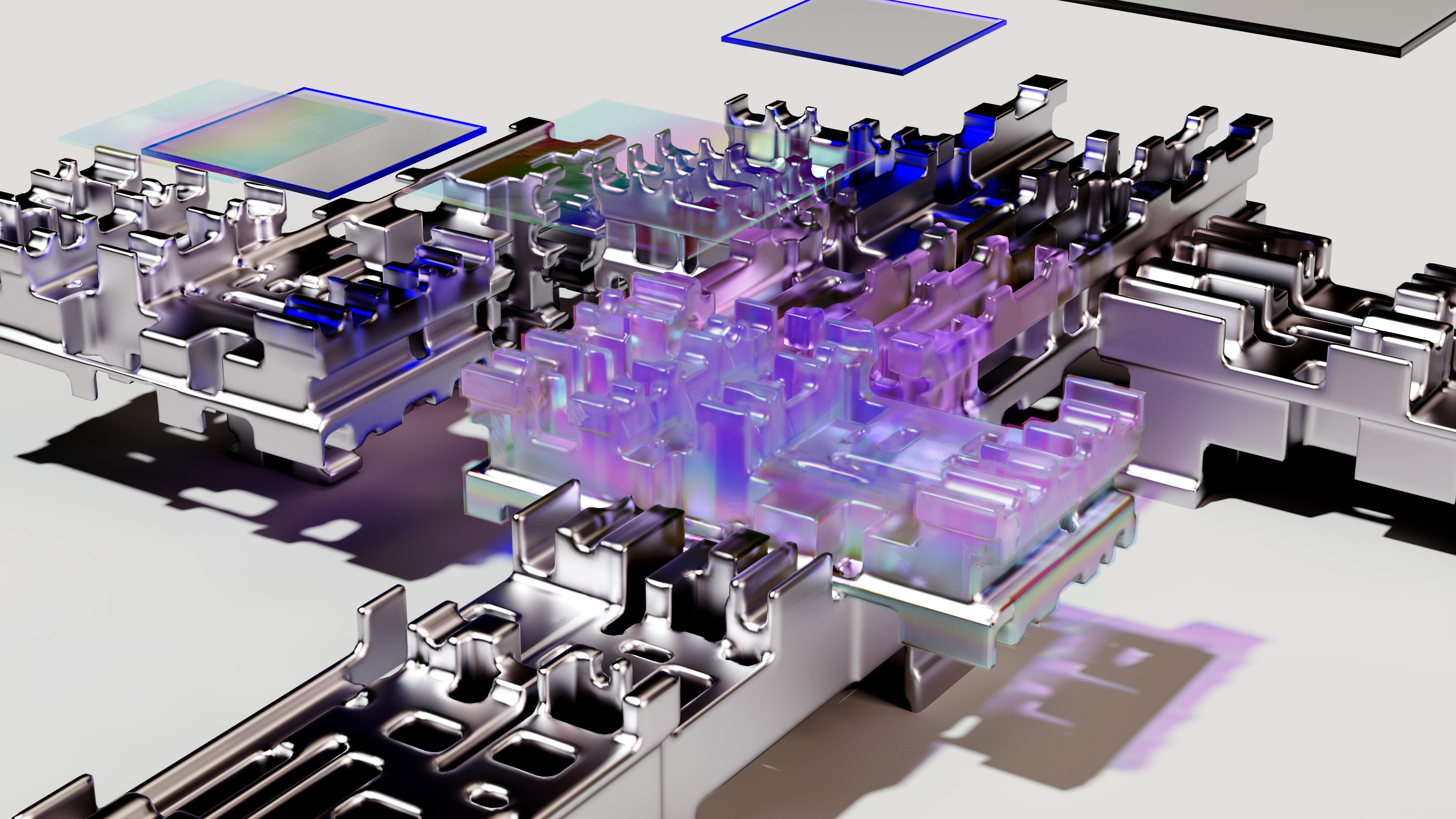

As illustrated in Figure 7 (a), (1) for activations, we group and scale elements on a 1x128 tile basis (i.e., per token per 128 channels); and (2) for weights, we group and scale elements on a 128x128 block basis (i.e., per 128 input channels per 128 output channels). As illustrated in Figure 6, the Wgrad operation is performed in FP8. Inspired by recent advances in low-precision training (Peng et al., 2023b; Dettmers et al., 2022; Noune et al., 2022), we propose a fine-grained mixed precision framework utilizing the FP8 data format for training DeepSeek-V3. To reduce memory consumption, it is a natural choice to cache activations in FP8 format for the backward pass of the Linear operator. Moreover, to further reduce memory and communication overhead in MoE training, we cache and dispatch activations in FP8, while storing low-precision optimizer states in BF16. Based on our mixed precision FP8 framework, we introduce several strategies to enhance low-precision training accuracy, focusing on both the quantization method and the multiplication process. Firstly, in order to accelerate model training, the majority of core computation kernels, i.e., GEMM operations, are implemented in FP8 precision.
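The 1x128 tile and 128x128 block grouping described above can be sketched in NumPy as follows. This is only an illustration of the grouping and of the shape of the resulting scale tensors, not the actual training kernels. The function names and the constant 448 (the largest finite E4M3 value in the common OCP FP8 variant) are assumptions that do not come from the text, and the values stay in float32 rather than being cast to a real FP8 type.

```python
import numpy as np

FP8_E4M3_MAX = 448.0  # assumed: largest finite E4M3 value (OCP FP8 variant)
GROUP = 128           # group size taken from the 1x128 / 128x128 description


def activation_tile_scales(x, group=GROUP):
    """One scale per token per 128 channels for a (tokens, channels) array."""
    tokens, channels = x.shape
    tiles = x.reshape(tokens, channels // group, group)
    amax = np.abs(tiles).max(axis=-1)            # (tokens, channels // group)
    return FP8_E4M3_MAX / np.maximum(amax, 1e-12)


def weight_block_scales(w, group=GROUP):
    """One scale per 128x128 block for an (in_channels, out_channels) array."""
    rows, cols = w.shape
    blocks = w.reshape(rows // group, group, cols // group, group)
    amax = np.abs(blocks).max(axis=(1, 3))       # (rows // group, cols // group)
    return FP8_E4M3_MAX / np.maximum(amax, 1e-12)


def apply_activation_scales(x, scales, group=GROUP):
    # Broadcast each tile scale back over its 128 channels.
    return x * np.repeat(scales, group, axis=1)


def apply_weight_scales(w, scales, group=GROUP):
    # Broadcast each block scale back over its 128x128 block.
    return w * np.repeat(np.repeat(scales, group, axis=0), group, axis=1)


rng = np.random.default_rng(0)
x = rng.standard_normal((4, 256)).astype(np.float32)     # 4 tokens, 256 channels
w = rng.standard_normal((256, 384)).astype(np.float32)   # 256 inputs, 384 outputs

x_scales = activation_tile_scales(x)   # shape (4, 2)
w_scales = weight_block_scales(w)      # shape (2, 3)
x_scaled = apply_activation_scales(x, x_scales)
w_scaled = apply_weight_scales(w, w_scales)
```

After scaling, the largest magnitude inside every tile or block sits at the assumed FP8 maximum, so each group uses the full representable range independently of its neighbours.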

This design enables overlapping of the two operations, maintaining high utilization of Tensor Cores.

It took leading Chinese tech firm Baidu just four months after the release of ChatGPT to launch its first LLM, Ernie Bot, in March 2023. In a little more than two years since the release of ChatGPT, China has developed at least 240 LLMs, according to one Chinese LLM researcher's data on GitHub. All four continue to invest in AI models today, and the program has grown to at least 15 companies. DeepSeek-R1, the AI model created by DeepSeek, a little-known Chinese company, at a fraction of what it cost OpenAI to build its own models, has sent the AI industry into a frenzy over the last couple of days. While its V3 and R1 models are undoubtedly impressive, they are built on top of innovations developed by US AI labs.

In low-precision training frameworks, overflows and underflows are common challenges due to the limited dynamic range of the FP8 format, which is constrained by its reduced exponent bits. Building upon widely adopted techniques in low-precision training (Kalamkar et al., 2019; Narang et al., 2017), we propose a mixed precision framework for FP8 training.
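To make the dynamic-range limitation concrete, the short calculation below derives the largest and smallest positive values of the two common FP8 layouts from their bit counts. The encoding details (exponent bias, and the fact that E4M3 reserves only a single NaN pattern in its top exponent code while E5M2 reserves the whole top code for infinities and NaNs) are assumptions based on the widely used OCP FP8 definition, not something stated in the text, and the helper names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fp8Limits:
    max_finite: float
    min_normal: float
    min_subnormal: float


def fp8_limits(exp_bits, man_bits, reserves_top_exponent):
    """Positive value limits of an FP8 layout with the given bit split."""
    bias = 2 ** (exp_bits - 1) - 1
    top_code = 2 ** exp_bits - 1
    if reserves_top_exponent:
        # IEEE-style (assumed for E5M2): the top exponent code is inf/NaN only.
        max_exp = top_code - 1 - bias
        max_mantissa = 2.0 - 2.0 ** -man_bits
    else:
        # E4M3-style (assumed): top code is usable, only the all-ones mantissa is NaN.
        max_exp = top_code - bias
        max_mantissa = 2.0 - 2.0 ** -(man_bits - 1)
    min_normal = 2.0 ** (1 - bias)
    return Fp8Limits(
        max_finite=max_mantissa * 2.0 ** max_exp,
        min_normal=min_normal,
        min_subnormal=min_normal * 2.0 ** -man_bits,
    )


e4m3 = fp8_limits(exp_bits=4, man_bits=3, reserves_top_exponent=False)
e5m2 = fp8_limits(exp_bits=5, man_bits=2, reserves_top_exponent=True)

print(e4m3.max_finite, e4m3.min_subnormal)   # 448.0 0.001953125
print(e5m2.max_finite, e5m2.min_subnormal)   # 57344.0 1.52587890625e-05
```

Under these assumptions E4M3 spans roughly 2^-9 to 448, so any value that is not rescaled into that window either saturates or rounds to zero.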

As a standard practice, the input distribution is aligned to the representable range of the FP8 format by scaling the maximum absolute value of the input tensor to the maximum representable value of FP8 (Narang et al., 2017). This method makes low-precision training highly sensitive to activation outliers, which can heavily degrade quantization accuracy. In contrast to the hybrid FP8 format adopted by prior work (NVIDIA, 2024b; Peng et al., 2023b; Sun et al., 2019b), which uses E4M3 (4-bit exponent and 3-bit mantissa) in Fprop and E5M2 (5-bit exponent and 2-bit mantissa) in Dgrad and Wgrad, we adopt the E4M3 format on all tensors for higher precision. Low-precision GEMM operations often suffer from underflow issues, and their accuracy largely depends on high-precision accumulation, which is commonly performed in FP32 precision (Kalamkar et al., 2019; Narang et al., 2017). However, we observe that the accumulation precision of FP8 GEMM on NVIDIA H800 GPUs is limited to retaining around 14 bits, which is significantly lower than FP32 accumulation precision. Despite the efficiency advantage of the FP8 format, certain operators still require a higher precision due to their sensitivity to low-precision computations. Besides, some low-cost operators can also utilize a higher precision with negligible overhead to the overall training cost.
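The outlier sensitivity of per-tensor scaling can be seen in a small simulation. The sketch below compares one scale for the whole tensor against one scale per 128-channel tile on data with a single, deliberately exaggerated outlier. The `fake_e4m3` helper is a hypothetical software approximation of E4M3 rounding (3 mantissa bits, minimum normal exponent -6, maximum 448, all assumed from the common OCP FP8 definition) and is not the hardware behaviour.

```python
import numpy as np

E4M3_MAX = 448.0  # assumed largest finite E4M3 value


def fake_e4m3(x):
    """Round to the nearest value on a simulated E4M3 grid (result stays float)."""
    x = np.clip(x, -E4M3_MAX, E4M3_MAX)
    _, exp = np.frexp(np.abs(x))        # |x| = m * 2**exp with m in [0.5, 1)
    exp = np.maximum(exp - 1, -6)       # below 2**-6 the subnormal spacing applies
    step = np.ldexp(1.0, exp - 3)       # 3 mantissa bits: 8 steps per power of two
    return np.round(x / step) * step


def per_tensor_roundtrip(x):
    """Quantize and dequantize with a single scale for the whole tensor."""
    scale = E4M3_MAX / np.abs(x).max()
    return fake_e4m3(x * scale) / scale


def per_tile_roundtrip(x, group=128):
    """Quantize and dequantize with one scale per row per `group` channels."""
    rows, cols = x.shape
    tiles = x.reshape(rows, cols // group, group)
    amax = np.abs(tiles).max(axis=-1, keepdims=True)
    scale = E4M3_MAX / np.maximum(amax, 1e-12)
    return (fake_e4m3(tiles * scale) / scale).reshape(rows, cols)


rng = np.random.default_rng(0)
x = rng.standard_normal((8, 512))
x[0, 0] = 1e6  # a single exaggerated activation outlier

err_tensor = np.abs(per_tensor_roundtrip(x) - x).mean()
err_tile = np.abs(per_tile_roundtrip(x) - x).mean()
print(f"per-tensor mean abs error: {err_tensor:.4f}")
print(f"per-tile   mean abs error: {err_tile:.4f}")
```

With one global scale the outlier pushes almost every ordinary value below the smallest representable step, whereas with per-tile scales the damage is confined to the single tile that contains the outlier.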

However, combined with our precise FP32 accumulation strategy, it can be efficiently implemented. We attribute the feasibility of this approach to our fine-grained quantization strategy, i.e., tile and block-wise scaling. In order to ensure accurate scales and simplify the framework, we calculate the maximum absolute value online for each 1x128 activation tile or 128x128 weight block. As depicted in Figure 6, all three GEMMs associated with the Linear operator, namely Fprop (forward pass), Dgrad (activation backward pass), and Wgrad (weight backward pass), are executed in FP8.

Ultimately, the US cannot be governed by Executive Orders, as the Trump crowd are already discovering. Investors and governments, including Japan's digital minister Masaaki Taira, are taking note. The government's push for open source in the early 2000s, including the creation of several OS software alliances and a locally developed "Red Flag Linux" (中科红旗), was a way to limit the influence of Microsoft Windows operating systems.
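As a rough illustration of how online tile and block scales can be combined with higher-precision accumulation, the sketch below emulates an Fprop GEMM in NumPy. Each 128-wide slice of the inner dimension is multiplied in simulated low precision, dequantized with its own activation and weight scales, and added to a float32 accumulator. Accumulating once per 128-element chunk is an assumption made here by reusing the group size from the text, and `fake_e4m3`, `quantize_activations`, `quantize_weights` and `fprop` are hypothetical names, not the real kernels.

```python
import numpy as np

E4M3_MAX = 448.0  # assumed largest finite E4M3 value
GROUP = 128


def fake_e4m3(x):
    """Simulated E4M3 rounding (assumed: 3 mantissa bits, min normal 2**-6)."""
    x = np.clip(x, -E4M3_MAX, E4M3_MAX)
    _, exp = np.frexp(np.abs(x))
    step = np.ldexp(1.0, np.maximum(exp - 1, -6) - 3)
    return np.round(x / step) * step


def quantize_activations(x):
    """Online max-abs scale per 1x128 tile of a (tokens, k) array."""
    m, k = x.shape
    tiles = x.reshape(m, k // GROUP, GROUP)
    scale = E4M3_MAX / np.maximum(np.abs(tiles).max(axis=-1), 1e-12)
    q = fake_e4m3(tiles * scale[..., None]).reshape(m, k)
    return q.astype(np.float32), scale.astype(np.float32)


def quantize_weights(w):
    """Online max-abs scale per 128x128 block of a (k, n) array."""
    k, n = w.shape
    blocks = w.reshape(k // GROUP, GROUP, n // GROUP, GROUP)
    scale = E4M3_MAX / np.maximum(np.abs(blocks).max(axis=(1, 3)), 1e-12)
    q = fake_e4m3(blocks * scale[:, None, :, None]).reshape(k, n)
    return q.astype(np.float32), scale.astype(np.float32)


def fprop(x, w):
    """Forward GEMM: quantized chunk products, dequantized into an FP32 accumulator."""
    x_q, x_s = quantize_activations(x)
    w_q, w_s = quantize_weights(w)
    out = np.zeros((x.shape[0], w.shape[1]), dtype=np.float32)
    for kc in range(x.shape[1] // GROUP):
        ks = slice(kc * GROUP, (kc + 1) * GROUP)
        partial = x_q[:, ks] @ w_q[ks, :]                  # product of one K-chunk
        col_scale = np.repeat(w_s[kc], GROUP)              # weight scale per output column
        out += partial / (x_s[:, kc:kc + 1] * col_scale)   # undo both scales, accumulate
    return out


rng = np.random.default_rng(0)
x = rng.standard_normal((4, 256)).astype(np.float32)
w = rng.standard_normal((256, 384)).astype(np.float32)
out = fprop(x, w)
rel_err = np.linalg.norm(out - x @ w) / np.linalg.norm(x @ w)
print(f"relative error vs. full-precision matmul: {rel_err:.4f}")
```

Because the scales differ from one chunk of the inner dimension to the next, the partial products cannot simply be summed first and rescaled afterwards, which is why the dequantization happens inside the accumulation loop. Dgrad and Wgrad would follow the same pattern with the corresponding operands.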

