A Must-Have List of DeepSeek China AI Networks


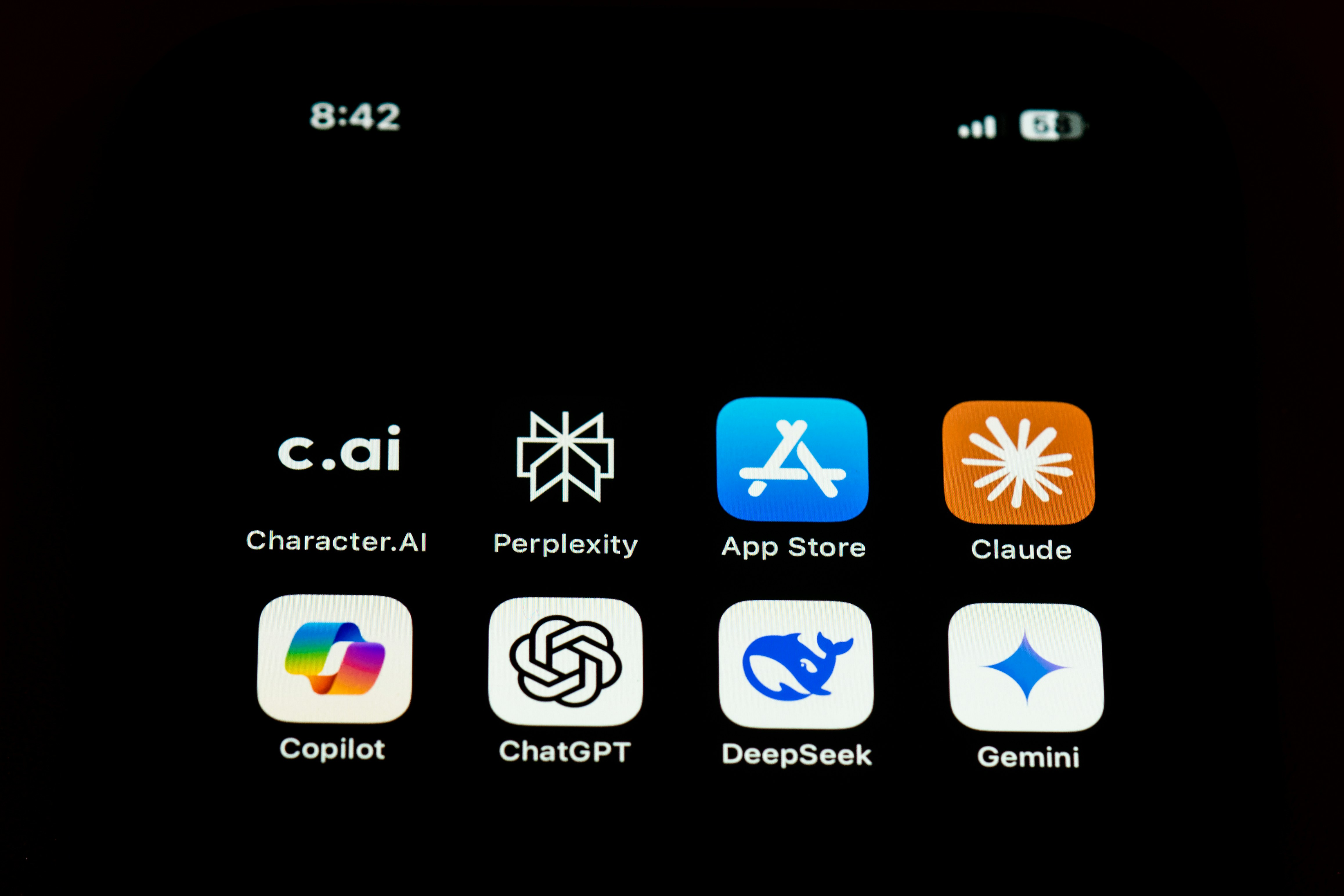

Distillation obviously violates the terms of service of various models, but the only way to stop it is to actually cut off access, via IP banning, rate limiting, and so on. It is assumed to be widespread in model training, and it is why an ever-increasing number of models are converging on GPT-4o quality. Distillation is easier for a company to do on its own models, because it has full access, but you can still do distillation in a somewhat more unwieldy way via API, or even, if you get creative, via chat clients. Zuckerberg noted that "there’s a lot of novel things they did we’re still digesting" and that Meta plans to implement DeepSeek’s "advancements" into Llama. Code Llama is a model made for generating and discussing code; it was built on top of Llama 2 by Meta. Generative power: GPT is known for producing coherent and contextually relevant text. PPTAgent: Generating and Evaluating Presentations Beyond Text-to-Slides. OpenAI told the Financial Times that it found evidence linking DeepSeek to the use of distillation, a common technique developers use to train AI models by extracting data from larger, more capable ones. There is also a common misconception that DeepSeek has a video generator or can be used for video generation.
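To make the idea of distillation concrete, here is a minimal sketch of its data-collection step: each prompt is sent to a "teacher" model and the answer is recorded as a training pair for a smaller "student". The names `build_distillation_set`, `to_jsonl`, and `toy_teacher` are hypothetical and chosen for illustration only; the teacher is a local stand-in function, not any real model or API.

```python
import json
from typing import Callable, Dict, Iterable, List


def build_distillation_set(
    prompts: Iterable[str], teacher: Callable[[str], str]
) -> List[Dict[str, str]]:
    """Record the teacher's answer to each prompt as a (prompt, completion) pair."""
    records = []
    for prompt in prompts:
        completion = teacher(prompt)  # the student is later trained to imitate this
        records.append({"prompt": prompt, "completion": completion})
    return records


def to_jsonl(records: List[Dict[str, str]]) -> str:
    """Serialize the pairs as JSON Lines, a common fine-tuning data layout."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)


def toy_teacher(prompt: str) -> str:
    """Hypothetical stand-in for a larger model: it simply upper-cases the prompt."""
    return prompt.upper()


records = build_distillation_set(["hello", "what is ptx?"], toy_teacher)
print(to_jsonl(records))
```

In a real setting the stand-in would be replaced by a model the trainer is permitted to query, and the resulting file would feed an ordinary supervised fine-tuning run for the student.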

DeepSeek engineers had to drop down to PTX, a low-level instruction set for Nvidia GPUs that is basically like assembly language. Meanwhile, DeepSeek also makes its models available for inference, which requires a whole bunch of GPUs above and beyond whatever was used for training. Apple Silicon uses unified memory, which means that the CPU, GPU, and NPU (neural processing unit) have access to a shared pool of memory; as a result, Apple’s high-end hardware actually has the best consumer chip for inference (Nvidia gaming GPUs max out at 32 GB of VRAM, while Apple’s chips go up to 192 GB of RAM). Usually a release that gains momentum this quickly is celebrated, so why is the market freaking out? My picture is of the long run; today is the short run, and it seems likely the market is working through the shock of R1’s existence. This famously ended up working better than other, more human-guided techniques. Everyone assumed that training leading-edge models required more interchip memory bandwidth, but that is exactly what DeepSeek optimized both its model structure and its infrastructure around. Dramatically decreased memory requirements for inference make edge inference much more viable, and Apple has the best hardware for exactly that.
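The memory comparison above can be made concrete with back-of-the-envelope arithmetic: the weights of a model occupy roughly (number of parameters) × (bits per parameter) / 8 bytes. The sketch below applies that formula to the 32 GB and 192 GB figures quoted in the text. The 70-billion-parameter model is a hypothetical example, and the estimate deliberately ignores activations, the KV cache, and runtime overhead, so real requirements are higher.

```python
def weights_gb(n_params: float, bits_per_param: int) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9


def fits(n_params: float, bits_per_param: int, memory_gb: float) -> bool:
    """True if the weights alone fit in the given memory (overhead is ignored)."""
    return weights_gb(n_params, bits_per_param) <= memory_gb


GAMING_GPU_GB = 32        # VRAM ceiling quoted in the text
UNIFIED_MEMORY_GB = 192   # unified-memory ceiling quoted in the text
N_PARAMS = 70e9           # hypothetical 70B-parameter model, for illustration

for bits in (16, 8, 4):
    size = weights_gb(N_PARAMS, bits)
    print(
        f"{bits:>2}-bit: {size:6.1f} GB | "
        f"fits in 32 GB: {fits(N_PARAMS, bits, GAMING_GPU_GB)} | "
        f"fits in 192 GB: {fits(N_PARAMS, bits, UNIFIED_MEMORY_GB)}"
    )
```

Under these assumptions, even a 4-bit copy of the hypothetical model (about 35 GB) exceeds a 32 GB card, while the 16-bit copy (about 140 GB) fits in 192 GB of unified memory.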

Apple is also a big winner. Another big winner is Amazon: AWS has by and large failed to make its own quality model, but that doesn’t matter if there are very high-quality open-source models that it can serve at far lower costs than expected. Meta, meanwhile, is the biggest winner of all. DeepSeek’s model is definitely competitive with OpenAI’s 4o and Anthropic’s Sonnet-3.5, and appears to be better than Llama’s biggest model. Despite its popularity with international users, the app appears to censor answers to sensitive questions about China and its government. DeepSeek made it, not by taking the well-trodden path of seeking Chinese government support, but by bucking the mold entirely. Until a few weeks ago, few people in the Western world had heard of a small Chinese artificial intelligence (AI) company known as DeepSeek. But "it may be very hard" for other AI companies in China to replicate DeepSeek’s successful organisational structure, which helped it achieve breakthroughs, said Mr Zhu, who is also the founder of the Centre for Safe AGI, a Shanghai-based non-profit that works with partners in China to devise ways in which artificial general intelligence can be safely deployed. R1 undoes the o1 mythology in a couple of important ways.

