The Untold Story on DeepSeek ChatGPT That You Will Need to Read or Be …


A simple strategy is to apply block-wise quantization per 128x128 elements, the same way we quantize the model weights. Although our tile-wise fine-grained quantization effectively mitigates the error introduced by feature outliers, it requires different groupings for activation quantization, i.e., 1x128 in the forward pass and 128x1 in the backward pass. A similar process is also required for the activation gradient. But I believe that the thought process does something similar for typical users to what the chat interface did. This incident resulted from a bug in the redis-py open source library that exposed active users’ chat histories to other users in some circumstances, and additionally exposed payment information of roughly 1.2% of ChatGPT Plus subscribers during a 9-hour window. 2. Platform Lock-In - Works best with Google services but lacks flexibility for users outside the ecosystem. Jianzhi began operations by providing educational content products and IT services to higher education institutions. Learn to develop and deploy an intelligent Spring Boot app on Azure Container Apps using PetClinic, Langchain4j, Azure OpenAI, and Cognitive Services with chatbot integration. DeepSeek’s AI chatbot has gained significant traction due to its unique advantages over competitors. Nasdaq futures plummeted nearly 4%, with Nvidia alone shedding over 11% of its valuation in pre-market trading.
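The groupings mentioned above (1x128, 128x1, and 128x128) differ only in which elements share one scaling factor. The following is a minimal sketch of that idea, not DeepSeek's implementation: the function name `group_scales`, the toy group size of 4 (standing in for 128), and the integer-style `qmax` of 127 (standing in for an FP8 range) are all assumptions made for illustration.

```python
def group_scales(matrix, group_rows, group_cols, qmax=127.0):
    """Return one scale per (group_rows x group_cols) group of `matrix`.

    Each scale maps the largest absolute value in its group onto `qmax`.
    `qmax` is an assumed stand-in for the largest representable value
    of the low-precision format.
    """
    n_rows, n_cols = len(matrix), len(matrix[0])
    scales = []
    for r0 in range(0, n_rows, group_rows):
        row_of_scales = []
        for c0 in range(0, n_cols, group_cols):
            amax = max(
                abs(matrix[r][c])
                for r in range(r0, min(r0 + group_rows, n_rows))
                for c in range(c0, min(c0 + group_cols, n_cols))
            )
            # Avoid a zero scale for an all-zero group.
            row_of_scales.append(amax / qmax if amax > 0 else 1.0)
        scales.append(row_of_scales)
    return scales


G = 4  # toy group size; the text uses 128
# An 8x8 toy activation matrix with values 1.0 .. 64.0.
activations = [[float(r * 8 + c + 1) for c in range(8)] for r in range(8)]

forward_scales = group_scales(activations, 1, G)   # 1xG tiles
backward_scales = group_scales(activations, G, 1)  # Gx1 tiles
block_scales = group_scales(activations, G, G)     # GxG blocks

print(len(forward_scales), len(forward_scales[0]))    # scale grid for 1xG
print(len(backward_scales), len(backward_scales[0]))  # scale grid for Gx1
print(len(block_scales), len(block_scales[0]))        # scale grid for GxG
```

The finer the grouping, the more scales have to be stored and applied, but the less a single large value can distort the scale used for its neighbors.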

Nvidia - the dominant player in AI chip design and, as of this morning, the world’s third-largest company by market cap - saw its stock price tumble after DeepSeek’s latest model demonstrated a level of efficiency that many on Wall Street fear could challenge America’s AI supremacy. Automating GPU Kernel Generation with DeepSeek-R1 and Inference Time Scaling - NVIDIA engineers successfully used the DeepSeek-R1 model with inference-time scaling to automatically generate optimized GPU attention kernels, outperforming manually crafted solutions in some cases. Hybrid 8-bit floating point (HFP8) training and inference for deep neural networks. Capabilities: GPT-4 (Generative Pre-trained Transformer 4) is a state-of-the-art language model known for its deep understanding of context, nuanced language generation, and multi-modal abilities (text and image inputs). CLUE: A Chinese language understanding evaluation benchmark. MMLU-Pro: A more robust and challenging multi-task language understanding benchmark. AGIEval: A human-centric benchmark for evaluating foundation models. Language models are multilingual chain-of-thought reasoners. CMATH: Can your language model pass Chinese elementary school math test? This approach is challenging traditional methods in the AI field and shows innovation can thrive despite limitations. But even before that, we have the unexpected demonstration that software innovations can also be important sources of efficiency and reduced cost.

The recent boom in artificial intelligence gives us a fascinating glimpse of future possibilities, such as the emergence of agentic AI and powerful multimodal AI systems that have also become increasingly mainstream. The artificial intelligence revolution is moving at lightning speed, and one of the biggest stories from last week underscores just how critical the technology has become - not just for Silicon Valley, but for America’s national security and global competitiveness. DeepSeek’s breakthrough isn’t just a financial story - it’s a national security issue. For additional analysis of DeepSeek’s technology, see this article by Sahin Ahmed or DeepSeek’s just-released technical report. On Jan. 22, President Donald Trump publicly touted an AI joint venture, dubbed Stargate, that could see OpenAI, Oracle and SoftBank invest $500 billion in U.S. President Donald Trump wasted no time responding, saying DeepSeek should be a "wake-up call" for Silicon Valley. ’s shaking Silicon Valley to its core.

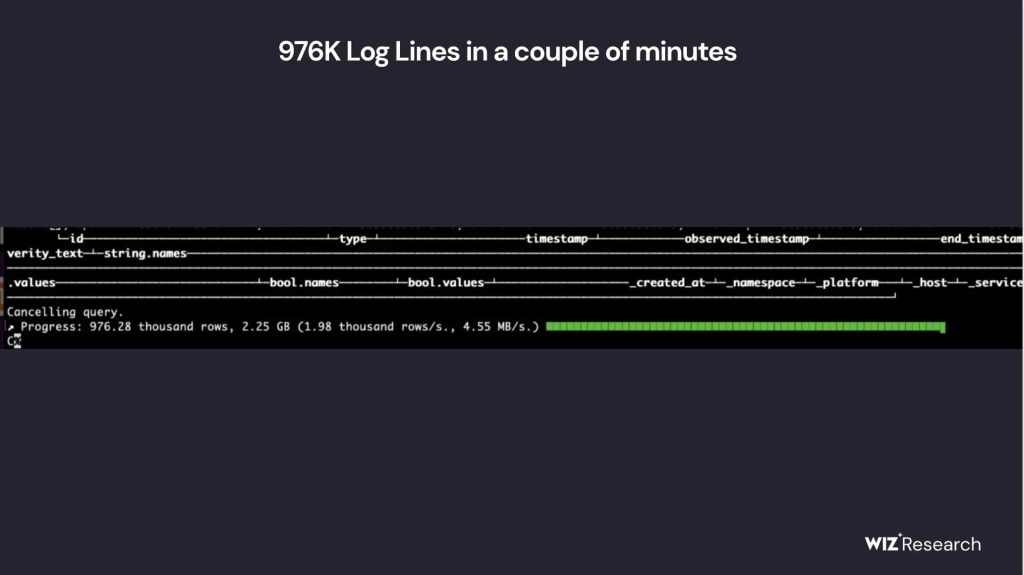

This sell-off indicated a sense that the next wave of AI models may not require the tens of thousands of high-end GPUs that Silicon Valley behemoths have amassed into computing superclusters to accelerate their AI innovation. The large-scale presence of Indian immigrants in Silicon Valley is also testament to India’s tech prowess - no doubt India will try in the coming years to lure top Indian IT people in Silicon Valley to return home and take part in India’s AI tech race. At the large scale, we train a baseline MoE model comprising approximately 230B total parameters on around 0.9T tokens. At the small scale, we train a baseline MoE model comprising approximately 16B total parameters on 1.33T tokens. Specifically, block-wise quantization of activation gradients leads to model divergence on an MoE model comprising approximately 16B total parameters, trained for around 300B tokens. We hypothesize that this sensitivity arises because activation gradients are highly imbalanced among tokens, resulting in token-correlated outliers (Xi et al., 2023). These outliers cannot be effectively managed by a block-wise quantization approach. Xia et al. (2023) H. Xia, T. Ge, P. Wang, S. Chen, F. Wei, and Z. Sui.
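One way to see why token-correlated outliers are hard for a block-wise scheme is a toy round trip through simulated quantization. The sketch below is illustrative only: the helper names `fake_quantize` and `max_abs_error`, the 4x4 gradient values, the group size of 4 (standing in for 128), and the use of integer rounding with `qmax=127` in place of a real FP8 format are all assumptions, and rows are assumed to correspond to tokens. It does not reproduce the divergence reported above; it only shows how one large-magnitude token can wipe out the other tokens that share its scale.

```python
def fake_quantize(matrix, group_rows, group_cols, qmax=127):
    """Quantize and dequantize `matrix` with one scale per group.

    Rounding to an integer grid is an assumed stand-in for FP8 casting.
    """
    n_rows, n_cols = len(matrix), len(matrix[0])
    out = [[0.0] * n_cols for _ in range(n_rows)]
    for r0 in range(0, n_rows, group_rows):
        for c0 in range(0, n_cols, group_cols):
            cells = [
                (r, c)
                for r in range(r0, min(r0 + group_rows, n_rows))
                for c in range(c0, min(c0 + group_cols, n_cols))
            ]
            amax = max(abs(matrix[r][c]) for r, c in cells)
            scale = amax / qmax if amax > 0 else 1.0
            for r, c in cells:
                out[r][c] = round(matrix[r][c] / scale) * scale
    return out


def max_abs_error(original, restored, rows):
    """Largest absolute round-trip error over the given row indices."""
    return max(
        abs(original[r][c] - restored[r][c])
        for r in rows
        for c in range(len(original[0]))
    )


# Hypothetical activation gradients: rows are tokens, columns are channels.
# Token 0 is an outlier whose gradients dwarf those of the other tokens.
grads = [
    [1000.0, -800.0, 600.0, -400.0],
    [0.01, -0.02, 0.03, -0.04],
    [0.04, 0.03, -0.02, 0.01],
    [-0.01, 0.02, -0.03, 0.04],
]

block_wise = fake_quantize(grads, 4, 4)  # one scale for the whole 4x4 block
per_token = fake_quantize(grads, 1, 4)   # one scale per token (1x4 tiles)

small_tokens = [1, 2, 3]
block_err = max_abs_error(grads, block_wise, small_tokens)
token_err = max_abs_error(grads, per_token, small_tokens)
print("block-wise error on non-outlier tokens:", block_err)
print("per-token error on non-outlier tokens: ", token_err)
```

With a shared block scale, the non-outlier tokens all round to zero, whereas giving each token its own scale keeps their values within a small rounding error.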

