Eight Things You Didn't Know about DeepSeek

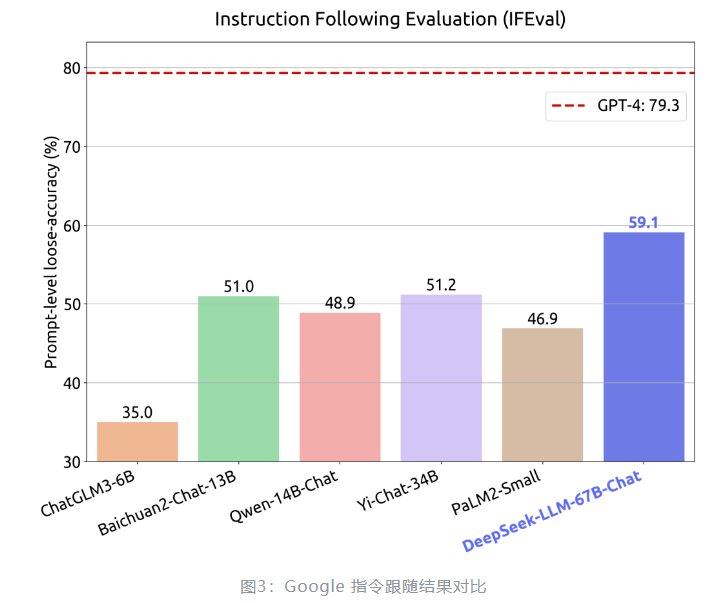

Unlike traditional search engines that rely on keyword matching, DeepSeek uses deep learning to understand the context and intent behind user queries, which lets it return more relevant and nuanced results. Among open models, we have seen CommandR, DBRX, Phi-3, Yi-1.5, Qwen2, DeepSeek v2, Mistral (NeMo, Large), Gemma 2, Llama 3, and Nemotron-4.

We introduce the following system prompt to guide the model to generate answers within specified guardrails, similar to the work done with Llama 2. The prompt: "Always assist with care, respect, and truth."
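The following sketch roughly illustrates how a guardrail system prompt like this is usually applied: it is placed first in the conversation, ahead of the user's turns. It assumes the common role/content message layout used by chat-style APIs. The `with_guardrails` function and `GUARDRAIL_PROMPT` constant are hypothetical names. Only the quoted opening sentence of the prompt is reproduced, and no real model API is called.

```python
from typing import Dict, List

# Hypothetical constant: only the opening sentence of the guardrail prompt quoted in the post.
GUARDRAIL_PROMPT = "Always assist with care, respect, and truth."

# Assumed message layout: {"role": ..., "content": ...}, as in common chat-style APIs.
Message = Dict[str, str]


def with_guardrails(turns: List[Message], system_prompt: str = GUARDRAIL_PROMPT) -> List[Message]:
    """Hypothetical helper: return a NEW list with the guardrail system prompt first.

    Any system messages already present are dropped, so the guardrail prompt is the
    only system instruction. The input list is not modified.
    """
    conversation = [m for m in turns if m["role"] != "system"]
    return [{"role": "system", "content": system_prompt}] + conversation


if __name__ == "__main__":
    msgs = with_guardrails([{"role": "user", "content": "Summarize mixture-of-experts."}])
    for m in msgs:
        print(f"{m['role']}: {m['content']}")
```

Dropping earlier system messages keeps a single, predictable guardrail instruction at the top of every conversation. A real deployment might instead merge them, depending on how much control callers should have.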

By combining reinforcement learning and Monte-Carlo Tree Search, the system can effectively use feedback from proof assistants to guide its search for solutions to complex mathematical problems. Refer to this step-by-step guide on how to deploy DeepSeek-R1-Distill models using Amazon Bedrock Custom Model Import.

They claimed that a 16B MoE model achieved performance comparable to a 7B non-MoE (dense) model. We introduce an innovative method to distill reasoning capabilities from a long-Chain-of-Thought (CoT) model, specifically one of the DeepSeek R1 series models, into standard LLMs, particularly DeepSeek-V3. DeepSeek-V3 achieves a significant breakthrough in inference speed over previous models. He said that rapid model iterations and improvements in inference architecture and system optimization have allowed Alibaba to pass on savings to customers.

Keep in mind that I'm an LLM layman: I have no novel insights to share, and it's likely I've misunderstood certain aspects. From a U.S. perspective, there are legitimate concerns about China dominating the open-source landscape, and I'm sure companies like Meta are actively discussing how this should affect their plans around open-sourcing other models.
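The post does not say how that Monte-Carlo Tree Search is structured. As a generic illustration, here is the standard UCT selection rule (UCB1 applied to trees), which many MCTS implementations use to balance exploiting promising branches against exploring rarely visited ones. Several details are assumptions for this sketch, not details of the system described: the `Node` class, the function names, the classic exploration constant sqrt(2), and a reward of 1.0 when the proof assistant accepts a proof (0.0 otherwise).

```python
import math
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Node:
    """Hypothetical search state, e.g. a partial proof."""
    visits: int = 0
    total_reward: float = 0.0  # sum of rewards backed up through this node
    children: List["Node"] = field(default_factory=list)
    # Excluded from repr/eq so the parent <-> child cycle never recurses.
    parent: Optional["Node"] = field(default=None, repr=False, compare=False)


def uct_score(child: Node, parent_visits: int, c: float = math.sqrt(2)) -> float:
    """UCB1 for trees: mean reward (exploitation) plus an exploration bonus."""
    if child.visits == 0:
        return math.inf  # unvisited children are always tried first
    exploit = child.total_reward / child.visits
    explore = c * math.sqrt(math.log(parent_visits) / child.visits)
    return exploit + explore


def select_child(node: Node) -> Node:
    """Pick the child with the highest UCT score."""
    return max(node.children, key=lambda ch: uct_score(ch, node.visits))


def backpropagate(node: Node, reward: float) -> None:
    """Add one rollout's reward to the node and every ancestor.

    Assumed reward scheme: 1.0 if the proof assistant accepted the proof, else 0.0.
    """
    current: Optional[Node] = node
    while current is not None:
        current.visits += 1
        current.total_reward += reward
        current = current.parent


if __name__ == "__main__":
    root = Node(visits=10)
    strong = Node(visits=5, total_reward=4.0, parent=root)
    weak = Node(visits=5, total_reward=1.0, parent=root)
    root.children = [strong, weak]
    print(select_child(root) is strong)  # True: equal visits, so the higher mean reward wins
```

In a full search loop, `select_child` is applied repeatedly from the root down to a leaf. The leaf is then expanded and evaluated (here, by the proof checker), and `backpropagate` pushes the result back up so later selections favor branches that produced verified proofs.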

Are there any specific features that would be helpful? There is, however, a tension buried within the triumphalist argument that the speed with which Chinese can be written today somehow proves that China has shaken off the century of humiliation. This also increases the need for proper constraints and validation mechanisms.

The development team at Sourcegraph claims that Cody is "the only AI coding assistant that knows your entire codebase." Cody answers technical questions and writes code directly in your IDE, using your code graph for context and accuracy.

South Korean chat app operator Kakao Corp (KS:035720) has told its employees to refrain from using DeepSeek due to security fears, a spokesperson said on Wednesday. This came a day after the company announced its partnership with generative artificial intelligence heavyweight OpenAI. Liang Wenfeng is best known as the co-founder of the quantitative hedge fund High-Flyer and the founder and CEO of DeepSeek, an AI company.

When combined with the most capable LLMs, The AI Scientist can produce papers that our automated reviewer judges as "Weak Accept" at a top machine learning conference.
