It was Reported that in 2025

DeepSeek uses a different method to train its R1 models than the one used by OpenAI. DeepSeek represents the latest challenge to OpenAI, which established itself as an industry leader with the debut of ChatGPT in 2022. OpenAI has helped push the generative AI industry forward with its GPT family of models, as well as its o1 line of reasoning models. DeepSeek R1 is an open-source AI reasoning model that matches industry-leading models like OpenAI's o1 at a fraction of the cost. It threatened the dominance of AI leaders like Nvidia and contributed to the biggest drop for a single company in US stock market history, as Nvidia lost $600 billion in market value. While there was a lot of hype around the DeepSeek-R1 launch, it has also raised alarms in the U.S., triggering concerns and a sell-off in tech stocks. In March 2022, High-Flyer advised certain clients who were sensitive to volatility to withdraw their money, as it predicted the market was more likely to fall further. Looking ahead, we can expect even more integrations with emerging technologies, such as blockchain for enhanced security or augmented reality applications that could redefine how we visualize data. Conversely, the lesser expert can become better at predicting other kinds of input, and is increasingly pulled away into another region.

The combined effect is that the experts become specialized: suppose two experts are both good at predicting a certain kind of input, but one is slightly better; then the weighting function would eventually learn to favor the better one. DeepSeek's models are "open weight", which gives less freedom for modification than true open-source software. Their product allows programmers to more easily integrate various communication methods into their software and programs. They minimized communication latency by extensively overlapping computation and communication, for example by dedicating 20 of the 132 streaming multiprocessors on each H800 exclusively to inter-GPU communication. To facilitate seamless communication between nodes in both A100 and H800 clusters, we employ InfiniBand interconnects, known for their high throughput and low latency. I don't get "interconnected in pairs." An SXM A100 node should have 8 GPUs connected all-to-all over an NVSwitch. In collaboration with the AMD team, we have achieved Day-One support for AMD GPUs using SGLang, with full compatibility for both FP8 and BF16 precision. ExLlama is compatible with Llama and Mistral models in 4-bit. Please see the Provided Files table above for per-file compatibility.
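The gating behavior described above can be illustrated with a minimal softmax top-k gate in plain Python. This is a sketch, not DeepSeek's router. The function names, the choice of k=2, and the toy experts are hypothetical. The training dynamics (gradients that push the gate toward the better expert) are not modeled.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_gate(logits, k):
    """Keep the k highest-scoring experts and renormalize their weights.

    Returns a dict mapping expert index -> gate weight (weights sum to 1).
    Hypothetical helper for illustration only.
    """
    ranked = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([logits[i] for i in chosen])
    return dict(zip(chosen, weights))

def moe_forward(x, experts, gate_logits, k=2):
    """Weighted sum of the selected experts' outputs; unselected experts are skipped."""
    gates = top_k_gate(gate_logits, k)
    return sum(w * experts[i](x) for i, w in gates.items())

# Toy usage: three "experts" as simple functions, gate favoring expert 0.
experts = [lambda x: 2 * x, lambda x: x + 1, lambda x: -x]
print(moe_forward(3.0, experts, [2.0, 1.0, -1.0], k=2))
```

Only the top-k experts receive nonzero weight for a given input, so only they receive gradient from it. This is the mechanism behind the specialization described above.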

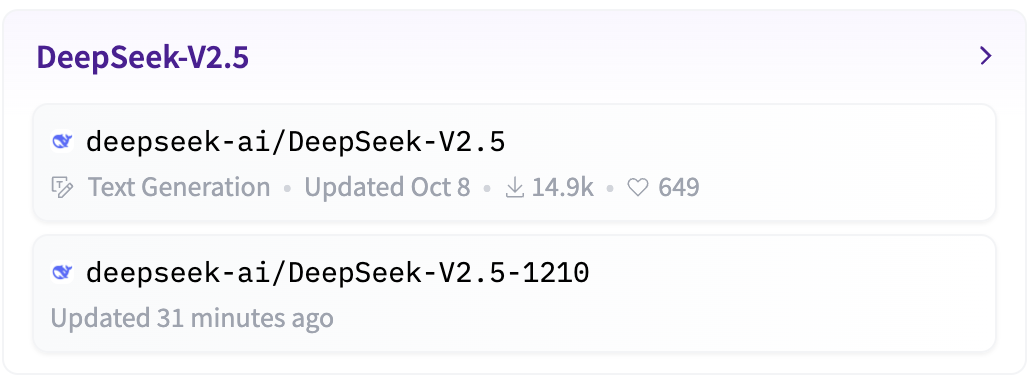

For example, in healthcare settings where rapid access to patient data can save lives or improve treatment outcomes, professionals benefit immensely from the fast search capabilities offered by DeepSeek. I bet I can find Nx issues that have been open for a very long time and only affect a few people, but I suppose since those issues don't affect you personally, they don't matter? It can be used for speculative decoding to accelerate inference. LMDeploy, a flexible and high-performance inference and serving framework tailored for large language models, now supports DeepSeek-V3. DeepSeek's language models, designed with architectures similar to LLaMA, underwent rigorous pre-training. DeepSeek, a Chinese AI company, is disrupting the industry with its low-cost, open-source large language models, challenging U.S. 2. Apply the same GRPO RL process as R1-Zero, adding a "language consistency reward" to encourage the model to respond in a single language. The accuracy reward checked whether a boxed answer is correct (for math) or whether code passes tests (for programming). Evaluation results on the Needle In A Haystack (NIAH) tests. On 29 November 2023, DeepSeek released the DeepSeek-LLM series of models. DeepSeek (深度求索), founded in 2023, is a Chinese company dedicated to making AGI a reality.
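The rule-based accuracy reward and the group-relative scoring behind GRPO can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not DeepSeek's training code. The function names are hypothetical, and answers are compared by exact string match after stripping whitespace (real graders normalize math expressions). The code-test reward and the language consistency reward are omitted, and the population standard deviation is used for normalization.

```python
import re
import statistics

# Matches a literal \boxed{...} with no nested braces (simplifying assumption).
BOXED = re.compile(r"\\boxed\{([^{}]*)\}")

def accuracy_reward(completion: str, reference: str) -> float:
    """Hypothetical rule-based reward: 1.0 if the last boxed answer matches the reference."""
    answers = BOXED.findall(completion)
    if not answers:
        return 0.0  # no boxed answer at all earns nothing
    return 1.0 if answers[-1].strip() == reference.strip() else 0.0

def group_relative_advantages(rewards, eps=1e-8):
    """GRPO-style advantage: score each sample against its own group of samples."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]

# Four sampled completions for one prompt whose reference answer is "42".
group = ["so \\boxed{42}", "maybe \\boxed{41}", "first \\boxed{7} then \\boxed{42}", "no box here"]
rewards = [accuracy_reward(c, "42") for c in group]
print(rewards, group_relative_advantages(rewards))
```

Each advantage is measured against the other samples for the same prompt, so this scheme needs no separate value (critic) model. Completions that beat their group average get a positive advantage, and the rest get a negative one.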

In key areas such as reasoning, coding, mathematics, and Chinese comprehension, the LLM outperforms other language models. The LLM was also trained with a Chinese worldview, a potential drawback given the country's authoritarian government. The number of attention heads does not equal the number of KV heads because of GQA. Typically, real-world performance is about 70% of your theoretical maximum speed because of several limiting factors, such as inference software, latency, system overhead, and workload characteristics, which prevent reaching the peak speed. The system prompt asked R1 to reflect and verify while thinking. Higher clock speeds also improve prompt processing, so aim for 3.6GHz or more. I actually had to migrate two commercial projects from Vite to Webpack because once they moved out of the PoC phase and became full-grown apps with more code and more dependencies, the build was eating over 4GB of RAM (that's the RAM limit in Bitbucket Pipelines, for example). These large language models must fit entirely in RAM or VRAM, and their weights are read from it each time they generate a new token (piece of text). By spearheading the release of these state-of-the-art open-source LLMs, DeepSeek AI has marked a pivotal milestone in language understanding and AI accessibility, fostering innovation and broader applications in the field.
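The points about GQA and memory bandwidth can be made concrete with back-of-the-envelope arithmetic. The sketch below assumes a standard dense transformer with grouped-query attention. The layer count, head counts, model size, and bandwidth are hypothetical example values, not figures for any DeepSeek model, and the 0.7 factor is the rough efficiency mentioned above.

```python
def kv_cache_bytes_per_token(n_layers, n_kv_heads, head_dim, bytes_per_elem=2):
    """Bytes of key/value cache stored per generated token.

    With GQA only n_kv_heads (fewer than the query heads) are cached,
    which is why the two head counts differ. bytes_per_elem=2 assumes FP16/BF16.
    """
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem  # factor 2 = K and V

def estimated_tokens_per_second(model_bytes, bandwidth_bytes_per_s, efficiency=0.7):
    """Rough decode speed for a memory-bandwidth-bound model.

    Generating one token streams all weights from memory once, so the
    theoretical ceiling is bandwidth / model size; efficiency scales it
    down to what is typically reached in practice.
    """
    return efficiency * bandwidth_bytes_per_s / model_bytes

# Hypothetical 32-layer model: 8 KV heads vs. 32 full heads, head_dim 128.
print(kv_cache_bytes_per_token(32, 8, 128), kv_cache_bytes_per_token(32, 32, 128))
# Hypothetical 4 GB model on a system with 50 GB/s memory bandwidth.
print(estimated_tokens_per_second(4e9, 50e9))
```

With these example values, 8 KV heads need 131,072 bytes (128 KiB) of cache per token, a quarter of what 32 full KV heads would need. The 4 GB model has a ceiling of 12.5 tokens/s, or about 8.75 tokens/s at 70% efficiency.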
