Life, Death And Deepseek

Where can I get help if I run into issues with DeepSeek on Windows?

Mathesar is a web application that makes working with PostgreSQL databases both simple and powerful. It's self-hosted, can be deployed in minutes, and works directly with PostgreSQL databases, schemas, and tables without extra abstractions. The DeepSeek API makes it straightforward to integrate advanced AI models, including DeepSeek R1, into your application using familiar API formats, which simplifies development. Configuration: configure the application as described in its documentation, which may involve setting environment variables, configuring paths, and adjusting settings to optimize performance. This minimizes performance loss without requiring massive redundancy.

DeepSeek's innovation here was creating what they call an "auxiliary-loss-free" load balancing strategy, which maintains efficient expert utilization without the usual performance degradation that comes from load balancing. DeepSeek cracked the problem of training in low-precision FP8 by developing a clever system that breaks numbers into small tiles for activations and blocks for weights, and strategically uses high-precision calculations at key points in the network.
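
The "auxiliary-loss-free" idea can be sketched in a few lines. This is a minimal illustration, assuming the scheme as we understand it from the DeepSeek-V3 technical report: each expert carries a bias that is added to its affinity score only when choosing the top-k experts, while the gating weights still come from the raw scores; after each step, the bias of overloaded experts is nudged down and that of underloaded experts is nudged up by a fixed step `gamma`. The function names, comparing each load against the mean, and the `gamma` value are illustrative assumptions, not DeepSeek's code.

```python
from typing import Dict, List


def route_tokens(scores: List[List[float]], bias: List[float], k: int) -> List[Dict[int, float]]:
    """Pick top-k experts per token using biased scores; gate with raw scores.

    scores[t][i] is the (positive) affinity of token t for expert i.
    Returns, for each token, a mapping {expert_index: gate_weight}.
    """
    assignments = []
    for row in scores:
        biased = [s + b for s, b in zip(row, bias)]
        # The bias only influences *which* experts are selected...
        top = sorted(range(len(row)), key=lambda i: biased[i], reverse=True)[:k]
        # ...while the gate weights are normalized over the raw affinities.
        total = sum(row[i] for i in top)
        assignments.append({i: row[i] / total for i in top})
    return assignments


def expert_loads(assignments: List[Dict[int, float]], num_experts: int) -> List[int]:
    """Count how many tokens were routed to each expert."""
    loads = [0] * num_experts
    for gates in assignments:
        for i in gates:
            loads[i] += 1
    return loads


def update_bias(bias: List[float], loads: List[int], gamma: float) -> List[float]:
    """Lower the bias of overloaded experts and raise it for underloaded ones."""
    mean = sum(loads) / len(loads)  # assumption: "overloaded" means above the mean load
    new_bias = []
    for b, load in zip(bias, loads):
        if load > mean:
            new_bias.append(b - gamma)
        elif load < mean:
            new_bias.append(b + gamma)
        else:
            new_bias.append(b)
    return new_bias


if __name__ == "__main__":
    # Four tokens that all prefer expert 0 when no bias is applied.
    scores = [
        [0.9, 0.8, 0.1, 0.1],
        [0.9, 0.7, 0.2, 0.1],
        [0.8, 0.1, 0.7, 0.1],
        [0.9, 0.1, 0.1, 0.6],
    ]
    bias = [0.0] * 4
    loads = expert_loads(route_tokens(scores, bias, k=1), 4)
    print(loads)  # [4, 0, 0, 0]
    bias = update_bias(bias, loads, gamma=0.25)
    print(expert_loads(route_tokens(scores, bias, k=1), 4))  # [0, 2, 1, 1]
```

No extra loss term is added to the training objective: balance comes only from the bias shifting selection. In practice `gamma` would be small so the biases drift gradually over many steps; the large step here just makes a single update visible.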
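
To make the tile idea concrete, here is a small pure-Python simulation of per-tile scaling before casting to an FP8-like format. It assumes an E4M3-style format (3 stored mantissa bits, largest finite value 448, subnormal step 2^-9) and models rounding only approximately, ignoring NaN handling. The DeepSeek-V3 report describes 1×128 tiles for activations and 128×128 blocks for weights; only the 1-D activation-tile case is shown here, with a configurable tile size. The helper names are hypothetical.

```python
import math
from typing import List, Tuple

E4M3_MAX = 448.0               # largest finite E4M3 value
E4M3_MIN_NORMAL = 2.0 ** -6    # smallest normal E4M3 value
E4M3_SUBNORMAL_STEP = 2.0 ** -9


def to_e4m3(x: float) -> float:
    """Round x to the nearest value of an (approximate) FP8 E4M3 grid."""
    if x == 0.0:
        return 0.0
    sign = -1.0 if x < 0 else 1.0
    a = min(abs(x), E4M3_MAX)  # saturate instead of overflowing
    if a < E4M3_MIN_NORMAL:
        # Subnormal range: evenly spaced values, so tiny inputs lose precision or vanish.
        return sign * round(a / E4M3_SUBNORMAL_STEP) * E4M3_SUBNORMAL_STEP
    m, e = math.frexp(a)       # a = m * 2**e with 0.5 <= m < 1
    m = round(m * 16) / 16     # keep 4 significant bits (1 implicit + 3 stored)
    return sign * min(math.ldexp(m, e), E4M3_MAX)


def quantize_tiles(values: List[float], tile: int) -> Tuple[List[float], List[float]]:
    """Scale each tile so its max |value| maps to E4M3_MAX, round, then dequantize.

    Returns (dequantized values, per-tile scales).
    """
    out: List[float] = []
    scales: List[float] = []
    for start in range(0, len(values), tile):
        chunk = values[start:start + tile]
        amax = max(abs(v) for v in chunk)
        scale = amax / E4M3_MAX if amax > 0 else 1.0
        scales.append(scale)
        out.extend(to_e4m3(v / scale) * scale for v in chunk)
    return out, scales


if __name__ == "__main__":
    # One activation row mixing large outliers and tiny values.
    row = [1000.0, -500.0, 250.0, 125.0, 0.001, 0.002, -0.003, 0.004]
    per_tile, _ = quantize_tiles(row, tile=4)          # two tiles, two scales
    one_scale, _ = quantize_tiles(row, tile=len(row))  # a single scale for the row
    print(per_tile[4:])   # small values survive with a few percent error at most
    print(one_scale[4:])  # small values are flushed to zero or badly rounded
```

With a single scale, the outliers dictate the range and the small values fall into the subnormal region or underflow to zero; per-tile scales keep each group near the top of the representable range.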

Dynamic Routing Architecture: a reconfigurable network reroutes data around defective cores, leveraging redundant pathways and spare cores. NVIDIA (2022). Improving Network Performance of HPC Systems Using NVIDIA Magnum IO NVSHMEM and GPUDirect Async. Cerebras Systems has written an article on semiconductor manufacturing describing how it achieved viable yields for wafer-scale processors despite their enormous size, challenging the longstanding belief that larger chips inherently suffer from lower yields.

Abstract: Reinforcement learning from human feedback (RLHF) has become an important technical and storytelling tool for deploying the latest machine learning systems. Reinforcement learning (RL): the reward model was a process reward model (PRM) trained from Base according to the Math-Shepherd method. Tensorgrad is a tensor and deep learning framework. MLX-Examples contains a wide range of standalone examples using the MLX framework.

Nvidia H100: this 814 mm² GPU contains 144 streaming multiprocessors (SMs), but only 132 are active in commercial products (1/12 are disabled to accommodate defects). To be specific, during MMA (Matrix Multiply-Accumulate) execution on Tensor Cores, intermediate results are accumulated using a limited bit width. There is an excellent blog post (albeit a bit long) that details some of the bull, base, and bear cases for NVIDIA by walking through the technical landscape, the competitors, and what that might mean for NVIDIA's future.
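
The spare-core idea behind such a reconfigurable network can be illustrated with a toy model: each row of a core grid has a few spare columns, and logical cores are mapped onto the healthy physical cores of their row, skipping defective ones. This is a hypothetical sketch for intuition only; the function name and the per-row spare layout are assumptions, not Cerebras's actual routing scheme.

```python
from typing import Dict, Set, Tuple

Coord = Tuple[int, int]  # (row, column)


def remap_cores(rows: int, physical_cols: int, spares_per_row: int,
                defective: Set[Coord]) -> Dict[Coord, Coord]:
    """Map each logical (row, col) core to a healthy physical core in the same row.

    Every row exposes physical_cols - spares_per_row logical cores. Defective
    cores are skipped, so later logical cores in that row shift onto the spares.
    """
    logical_cols = physical_cols - spares_per_row
    mapping: Dict[Coord, Coord] = {}
    for r in range(rows):
        healthy = [c for c in range(physical_cols) if (r, c) not in defective]
        if len(healthy) < logical_cols:
            raise RuntimeError(
                f"row {r}: {physical_cols - len(healthy)} defects exceed {spares_per_row} spares"
            )
        for lc in range(logical_cols):
            mapping[(r, lc)] = (r, healthy[lc])
    return mapping


if __name__ == "__main__":
    # 2 rows x 5 physical cores, 1 spare per row, one defect at (0, 2).
    m = remap_cores(2, 5, 1, {(0, 2)})
    print(m[(0, 1)], m[(0, 2)], m[(0, 3)])  # (0, 1) (0, 3) (0, 4)
    print(m[(1, 3)])                         # (1, 3): row 1 is untouched
```

A similar budget shows up in the H100 numbers above: exposing 132 of 144 physical SMs leaves a margin of 12 units that can be defective without scrapping the die.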
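
The effect of a narrow accumulator, and the usual remedy of periodically promoting partial sums into a wider one, can be shown with a deliberately exaggerated toy model. As we read the DeepSeek-V3 technical report, Tensor Core partial results are copied to FP32 registers on CUDA cores at a fixed interval of 128 elements. Here the narrow accumulator is modeled by rounding to only 8 significant bits after every addition, far fewer than real hardware keeps, so the error is easy to see. The helper names are hypothetical.

```python
import math
from typing import List


def round_to_bits(x: float, bits: int) -> float:
    """Round x to `bits` significant binary digits (toy model of a narrow accumulator)."""
    if x == 0.0:
        return 0.0
    m, e = math.frexp(x)  # x = m * 2**e with 0.5 <= |m| < 1
    return math.ldexp(round(m * (1 << bits)) / (1 << bits), e)


def dot_limited(a: List[float], b: List[float], bits: int) -> float:
    """Dot product where every partial sum is kept at limited precision."""
    acc = 0.0
    for x, y in zip(a, b):
        acc = round_to_bits(acc + x * y, bits)
    return acc


def dot_promoted(a: List[float], b: List[float], bits: int, interval: int = 128) -> float:
    """Accumulate in limited precision, but flush into a full-precision sum every `interval` terms."""
    total = 0.0    # wide accumulator (a Python float, i.e. FP64 here)
    partial = 0.0  # narrow accumulator
    for idx, (x, y) in enumerate(zip(a, b), start=1):
        partial = round_to_bits(partial + x * y, bits)
        if idx % interval == 0:
            total += partial
            partial = 0.0
    return total + partial


if __name__ == "__main__":
    ones = [1.0] * 4096
    print(dot_limited(ones, ones, bits=8))   # 256.0: the sum stalls once +1 falls below the rounding step
    print(dot_promoted(ones, ones, bits=8))  # 4096.0: each 128-term chunk fits exactly before promotion
```

The promotion interval trades extra data movement for accuracy: each chunk stays small enough for the narrow accumulator, and only the short list of chunk sums needs the wide one.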

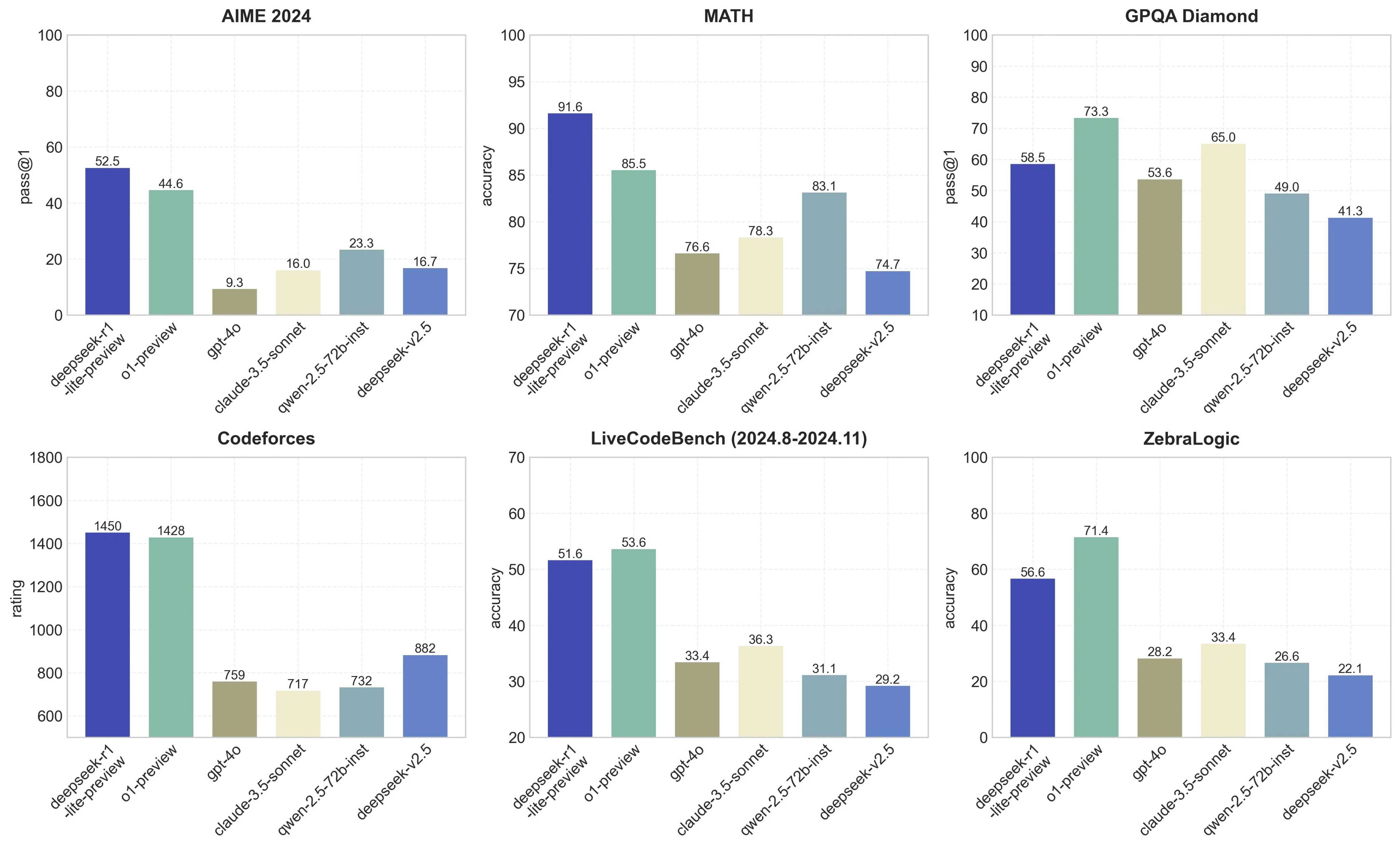

Skipping SFT: applying RL directly to the base model. Download the model weights from Hugging Face and put them in the /path/to/DeepSeek-V3 folder. Those who use the R1 model in DeepSeek's app can also see its "thought" process as it answers questions. Download and install the app on your device. The following set of new languages is coming in an April software update. We then set the stage with definitions, problem formulation, data collection, and other common math used in the literature.

Unlike other labs that train in high precision and then compress later (losing some quality in the process), DeepSeek's native FP8 approach means they get the large memory savings without compromising performance.

It supports PDFs (even ones that require OCR), Word files, etc., and it even lets you submit an audio file, automatically transcribes it with the Whisper model, cleans up the resulting text, and then computes the embeddings for it. To save computation, these embeddings are cached in SQLite and retrieved if they have already been computed.

Note: best results are shown in bold. Note: all models are evaluated in a configuration that limits the output length to 8K. Benchmarks containing fewer than 1,000 samples are tested multiple times using varying temperature settings to derive robust final results.
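
A minimal sketch of an embedding cache like the one described above, using Python's built-in `sqlite3` module. The table layout, the SHA-256 key over the text, the JSON-encoded vectors, and the `embed` callback are all assumptions for illustration; the original tool's schema is not given here.

```python
import hashlib
import json
import sqlite3
from typing import Callable, List

Vector = List[float]


class EmbeddingCache:
    """Cache embeddings in SQLite, keyed by a hash of the input text."""

    def __init__(self, path: str = ":memory:") -> None:
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS embeddings ("
            " text_hash TEXT PRIMARY KEY,"
            " vector TEXT NOT NULL)"
        )

    def get_or_compute(self, text: str, embed: Callable[[str], Vector]) -> Vector:
        key = hashlib.sha256(text.encode("utf-8")).hexdigest()
        row = self.conn.execute(
            "SELECT vector FROM embeddings WHERE text_hash = ?", (key,)
        ).fetchone()
        if row is not None:
            return json.loads(row[0])  # cache hit: skip the expensive call
        vector = embed(text)           # cache miss: compute once...
        self.conn.execute(
            "INSERT INTO embeddings (text_hash, vector) VALUES (?, ?)",
            (key, json.dumps(vector)),
        )
        self.conn.commit()             # ...and persist it for next time
        return vector


if __name__ == "__main__":
    calls: List[str] = []

    def fake_embed(text: str) -> Vector:
        # Stand-in for a real embedding model; records each invocation.
        calls.append(text)
        return [float(len(text)), 1.0]

    cache = EmbeddingCache()
    cache.get_or_compute("hello", fake_embed)
    cache.get_or_compute("hello", fake_embed)
    print(len(calls))  # 1: the second lookup is served from SQLite
```

Keying on a hash of the text rather than the text itself keeps the primary key short even for long documents; passing a file path instead of `":memory:"` makes the cache survive restarts.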

Then, depending on the nature of the inference request, you can intelligently route the inference to the "expert" models within that collection of smaller models that are most capable of answering that question or solving that task. The growing use of chain-of-thought (CoT) reasoning marks a new era for large language models. Transformer language model training. Bidirectional language understanding with BERT. They have one cluster that they are bringing online for Anthropic that features over 400k chips. You are now able to log in. With a quick and easy setup process, you immediately get access to a veritable "Swiss Army Knife" of LLM-related tools, all accessible through a convenient Swagger UI and ready to be integrated into your own applications with minimal fuss or configuration. Most LLMs write code to access public APIs very well but struggle with accessing private APIs.

Well, instead of trying to fight Nvidia head-on by using a similar approach and trying to match the Mellanox interconnect technology, Cerebras has taken a radically innovative approach to do an end-run around the interconnect problem: inter-processor bandwidth becomes much less of an issue when everything is running on the same super-sized chip.
